This post provides a high-level overview of the Enterprise Hybrid Cloud 4.x ‘Hybrid Management Model’, which is the default model used for VxBlock and VxRack EHC solutions.

The following Dell EMC acronyms are used throughout this post:

EHC = Enterprise Hybrid Cloud

CPSD = Converged Platforms & Solutions Division

AMP = Advanced Management Pod

ESSM = EMC Simple Support Matrix

RCM = Release Certification Matrix

vCSA = vCenter Server Appliance

CI = Converged Infrastructure

HCI = Hyper-Converged Infrastructure

LCS = Logical Configuration Survey

The images used in this post were taken from the EHC Architecture Guides. For more detailed architectural explanations, please refer to the following EHC 4.1 Architecture Guides:

EHC Reference Architecture Guide

EHC Concepts and Architecture Guide

In the CPSD space, the CI/HCI EHC management model is referred to as the ‘EHC Hybrid Management Model’. We call it the ‘Hybrid’ model because some EHC management functionality is overlaid onto the preexisting VxBlock/VxRack management functions. The following CI/HCI platforms support the ‘EHC Hybrid Management Model’:

- VxBlock 340 (Core Storage = VNX2)

- VxBlock 540 (Core Storage = XtremIO)

- VxBlock 740 (Core Storage = VMAX3)

- VxRack FLEX (Core Storage = ScaleIO)

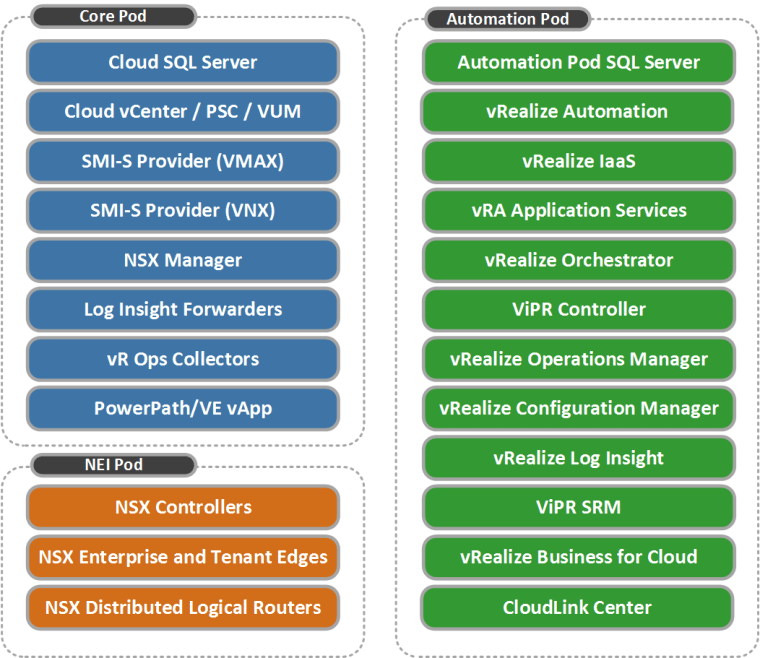

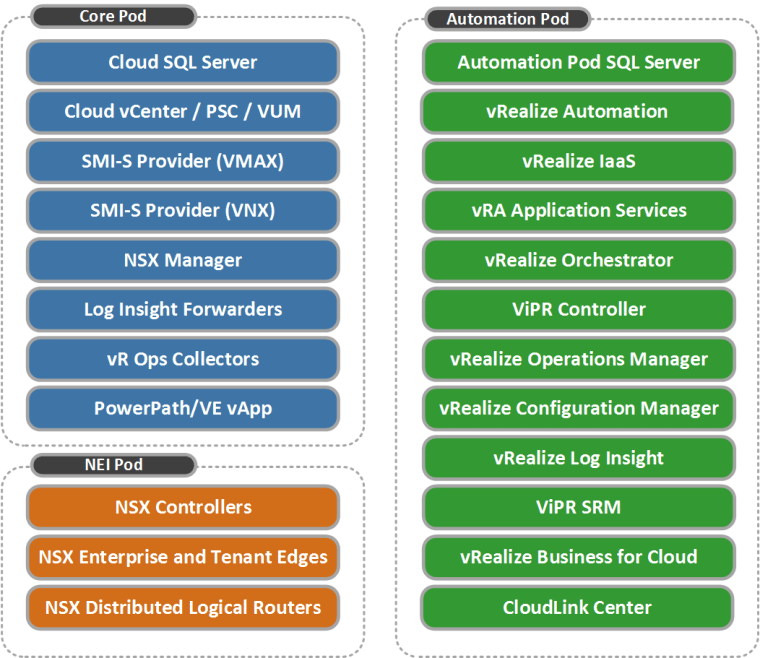

EHC Solution Components

The following image represents the EHC solution components and their respective management Pods (Core|NEI|Automation):

The VxBlock/VxRack ‘EHC Hybrid Management Model’ correlates with the above and comprises these three Pods, with the AMP essentially becoming the Core Pod:

- Core Pod (VxBlock AMP / VxRack Controllers)

- Automation Pod

- NEI Pod

Note: a Pod does not imply an ESXi cluster. As you will see below, some of the NEI and Automation Pod components live in the AMP (VxBlock) / Controller (VxRack) cluster. In a VxBlock the management platform is referred to as the AMP; the equivalent management platform in VxRack is referred to as the VxRack Controller Cluster.

EHC AMP Choices

For the VxBlock AMP there are two choices for an EHC solution:

1. The legacy ‘AMP-2HAP’, which can be used in brownfield scenarios:

‘AMP-2HAP’: this is the Highly Available Performance AMP, which includes three Cisco UCS C220/C240 servers and additional ‘FAST VP’ VNXe3200 storage.

2. The recently redesigned, scalable and powerful ‘AMP-2S’, which includes the following component updates:

- Cisco UCS C220 M4 1U SFF ESXi hosts: the default quantity is 3 (the minimum the EHC Core Pod requires), expandable to 12 hosts if required. Each C220 M4 is a 2-socket server with Intel Xeon E5-2600 series processors and 128GB of RAM, expandable to 384GB if required.

- vCSA as the default vCenter Server configuration (Windows vCenter version is optional).

- vCenter Update Manager and embedded MS SQL Server 2012 Database for VUM.

- VNXe3200 shared storage with a default capacity of ~8TB, scalable to the VNXe3200 maximum of 150 drives (~500TB).
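As a rough sizing aid, the AMP-2S host and memory ranges above reduce to simple arithmetic. The Python sketch below encodes only the ranges quoted in this post; the `Amp2sConfig` name and the one-host-failure view are illustrative assumptions, not a Dell EMC sizing tool.

```python
from dataclasses import dataclass

# Limits taken from the AMP-2S description above.
MIN_HOSTS = 3        # default quantity, and the EHC Core Pod minimum
MAX_HOSTS = 12       # maximum AMP-2S host count
MIN_RAM_GB = 128     # default RAM per C220 M4 host
MAX_RAM_GB = 384     # maximum RAM per C220 M4 host


@dataclass(frozen=True)
class Amp2sConfig:
    hosts: int
    ram_per_host_gb: int

    def __post_init__(self) -> None:
        # Reject configurations outside the documented AMP-2S range.
        # Simplification: real memory upgrades come in discrete DIMM steps.
        if not MIN_HOSTS <= self.hosts <= MAX_HOSTS:
            raise ValueError(f"AMP-2S supports {MIN_HOSTS}-{MAX_HOSTS} hosts, got {self.hosts}")
        if not MIN_RAM_GB <= self.ram_per_host_gb <= MAX_RAM_GB:
            raise ValueError(f"RAM per host must be {MIN_RAM_GB}-{MAX_RAM_GB}GB, got {self.ram_per_host_gb}")

    def total_ram_gb(self) -> int:
        """Aggregate RAM across all AMP hosts."""
        return self.hosts * self.ram_per_host_gb

    def ram_after_host_failure_gb(self) -> int:
        """RAM left for management VMs if one host fails (illustrative N+1 view)."""
        return (self.hosts - 1) * self.ram_per_host_gb


default = Amp2sConfig(hosts=3, ram_per_host_gb=128)
maxed = Amp2sConfig(hosts=12, ram_per_host_gb=384)
print(default.total_ram_gb(), default.ram_after_host_failure_gb())  # 384 256
print(maxed.total_ram_gb())  # 4608
```

The N+1 figure is worth checking against your management VM footprint: with the default 3 hosts, only two hosts' worth of memory remains after a host failure.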

The VxRack AMP (Controller Cluster) consists of the following:

- VMware vSAN for the AMP Controller storage (ScaleIO provides the production storage for the tenant clusters).

- A 3-node ESXi cluster is the default for the VxRack AMP Controller Cluster, but when used with EHC the AMP Controller Cluster requires at least 4 ESXi hosts. The 4th ESXi host ensures that storage can suffer the loss of a cluster node without compromising storage integrity (there is an excellent post here on the reasons why 4-node configurations offer greater availability).

- vCSA is the standard vCenter Server configuration.

- vCenter Update Manager and embedded MS SQL Server 2012 Database for VUM.

- Cisco Nexus 3K Management Switches.
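The reasoning behind the 4th controller host can be sketched with vSAN's host-count rule for mirrored (RAID-1) objects: tolerating `ftt` host failures needs 2 * ftt + 1 hosts, so a 3-node cluster with FTT=1 stays available after losing a host but has no spare host to rebuild the lost components onto. This sketch assumes the default vSAN policy of FTT=1 with RAID-1 mirroring and one fault domain per host; the function names are hypothetical.

```python
def min_hosts_raid1(ftt: int) -> int:
    """Hosts needed for a RAID-1 (mirrored) vSAN object that tolerates `ftt`
    host failures: ftt + 1 data replicas plus witness components, spread
    across 2 * ftt + 1 separate hosts."""
    if ftt < 0:
        raise ValueError("ftt must be non-negative")
    return 2 * ftt + 1


def can_rebuild_after_failures(hosts: int, ftt: int, failed: int = 1) -> bool:
    """True if, after `failed` hosts are lost, enough hosts remain to rebuild
    the missing components and restore full policy compliance."""
    return hosts - failed >= min_hosts_raid1(ftt)


for hosts in (3, 4):
    state = "self-heals" if can_rebuild_after_failures(hosts, ftt=1) else "runs degraded until the host returns"
    print(f"{hosts} hosts: {state}")
```

With 3 hosts the data remains accessible after a failure, but it stays unprotected until the failed host returns; the 4th host is what allows vSAN to re-protect the data on its own.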

Please work with your local vArchitect and vSpecialist to size your EHC solution for your specific requirements.

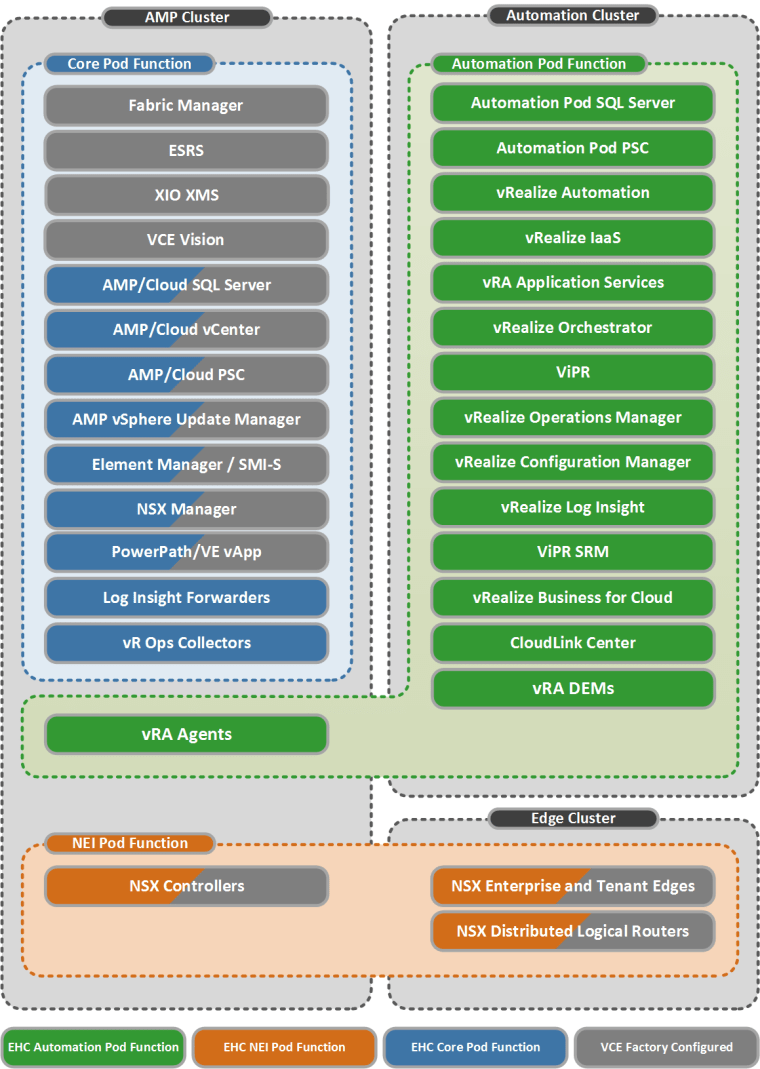

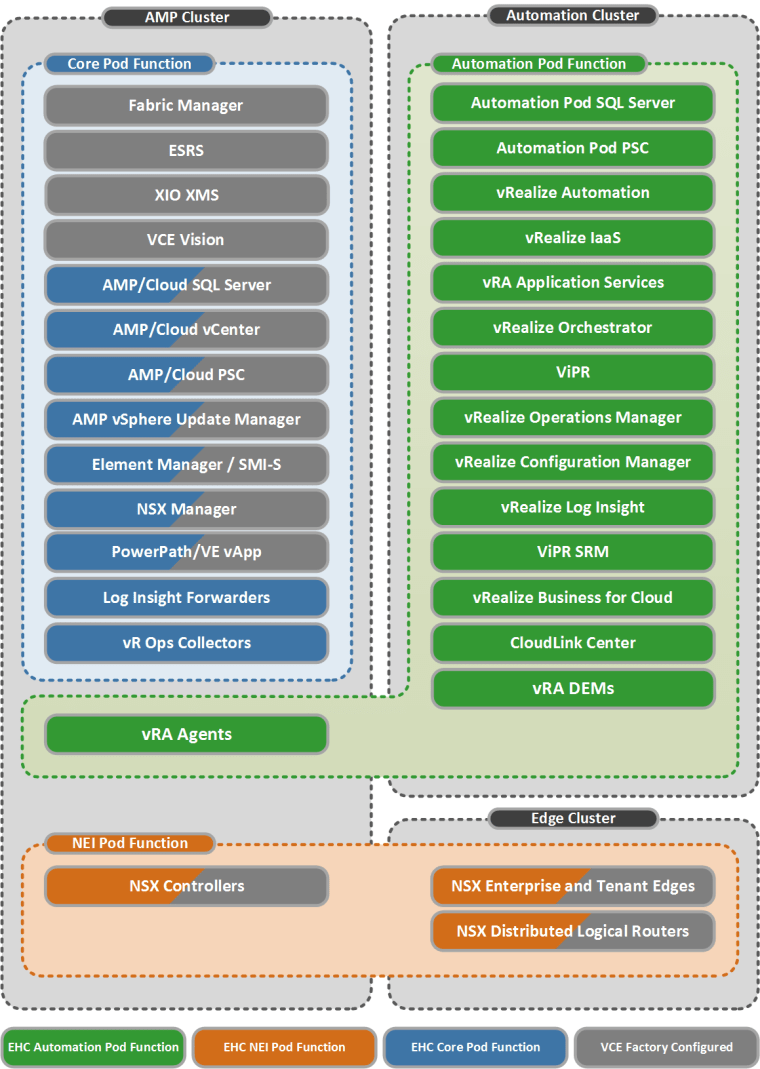

EHC Core Pod Function

The ‘EHC Hybrid Management Model’ includes a single vCenter by default and utilises the resources and components available in the VxBlock/VxRack AMP to host the overlaid EHC Core Pod components. This makes efficient use of the hardware and software resources already available in the AMP and eliminates the need for a dedicated Core Pod cluster. The single Cloud vCenter in the AMP manages the three associated management Pods (Core (AMP), Automation and NEI) in addition to the tenant ESXi clusters.

The figure below depicts how the EHC Core Pod components overlay the AMP management VMs residing on the AMP Cluster in a VxBlock/VxRack solution; the overlaid Core Pod management functions are depicted by the boxes shaded blue and grey. Log Insight Forwarders and vR Ops Collectors are also located in the AMP as part of the Core Pod functionality.
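Because a Pod is a logical grouping rather than an ESXi cluster, it can help to model placement explicitly. The hypothetical table below is assembled from the component locations described in this post (Core Pod and NSX Manager/Controllers in the AMP, NSX Edges and DLRs in the Edge Cluster, the two vRA agents in the AMP, ViPR in the Automation Cluster). The Automation Pod PSC location is an assumption, since the post does not state it.

```python
from collections import defaultdict

# Hypothetical placement table: (component, EHC Pod, ESXi cluster it runs in),
# assembled from the locations described in this post.
PLACEMENT = [
    ("Cloud vCenter (vCSA)", "Core", "AMP"),
    ("Core Pod PSC 1", "Core", "AMP"),
    ("Core Pod PSC 2", "Core", "AMP"),
    ("Log Insight Forwarders", "Core", "AMP"),
    ("vR Ops Collectors", "Core", "AMP"),
    ("NSX Manager", "NEI", "AMP"),
    ("NSX Controllers", "NEI", "AMP"),
    ("NSX Edges and DLRs", "NEI", "Edge"),
    ("IaaS vRA Agent 1", "Automation", "AMP"),
    ("IaaS vRA Agent 2", "Automation", "AMP"),
    ("ViPR nodes", "Automation", "Automation"),
    ("Automation Pod PSC", "Automation", "Automation"),  # assumed location
]


def pods_per_cluster(placement):
    """Map each ESXi cluster to the set of Pods that have components in it."""
    result = defaultdict(set)
    for _component, pod, cluster in placement:
        result[cluster].add(pod)
    return dict(result)


for cluster, pods in sorted(pods_per_cluster(PLACEMENT).items()):
    print(f"{cluster}: {sorted(pods)}")
```

Grouping by cluster makes the overlay visible: the AMP cluster ends up hosting components from all three Pods, while the Edge and Automation clusters each serve a single Pod.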

EHC NEI Pod Function

The NSX Controllers and Manager, which are part of the NEI Pod functionality, also reside in the AMP and are depicted by the boxes shaded orange and grey. The Edge Cluster hosts the required NSX Edge and DLR devices and completes the NEI Pod requirements; this cluster requires a minimum of 4 ESXi hosts to meet VMware best practice for host failure scenarios.

Here is an example diagram of the VxBlock/VxRack ‘EHC Hybrid Management Model’ architecture as it applies to a single-site EHC + NSX solution (Source: EHC Reference Architecture Guide):

EHC Automation Pod Function

The Automation Cluster must have at least 3 ESXi hosts; this is required to meet ViPR best practices for ViPR node separation. The Automation Cluster may consist of Cisco B-Series blades or C-Series rack mount hosts, but not a mix of both.

You will notice from the figure above that, while the majority of the Automation Pod components are located in the Automation Cluster, the two IaaS vRA Agent VMs (EHC Automation Pod functions) reside in the VxBlock/VxRack AMP Cluster. They are placed in the AMP to be co-located with the Cloud vCenter they connect to. In addition to the 2 Core Pod PSC appliances installed in the AMP, there is also an Automation Pod PSC, which services the authentication requirements of the Automation Pod management components.
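The host-count and hardware rules for the management clusters lend themselves to a simple pre-deployment check. The sketch below is an illustration only: the role keys, the `Cluster` type and the convention that Cisco UCS model names begin with "B" or "C" are assumptions, and the minimums are the ones quoted in this post rather than an authoritative rule set.

```python
from dataclasses import dataclass

# Minimum ESXi hosts per cluster role, as stated in this post.
MIN_HOSTS = {
    "vxblock_amp": 3,        # AMP-2S default; the EHC Core Pod minimum
    "vxrack_controller": 4,  # VxRack Controller Cluster when used with EHC
    "automation": 3,         # ViPR node separation
    "edge": 4,               # VMware best practice for host failure scenarios
}


@dataclass
class Cluster:
    name: str
    role: str                # a key of MIN_HOSTS
    host_models: list[str]   # one Cisco UCS model name per ESXi host, e.g. "B200 M4"


def ucs_series(model: str) -> str:
    # Assumption: UCS model names start with "B" (blade) or "C" (rack mount).
    return model.strip().upper()[:1]


def check_cluster(cluster: Cluster) -> list[str]:
    """Return a list of rule violations (empty if the cluster passes)."""
    problems = []
    minimum = MIN_HOSTS[cluster.role]
    if len(cluster.host_models) < minimum:
        problems.append(f"{cluster.name}: {len(cluster.host_models)} hosts, needs at least {minimum}")
    # The Automation Cluster must not mix B-Series and C-Series hosts.
    if cluster.role == "automation" and len({ucs_series(m) for m in cluster.host_models}) > 1:
        problems.append(f"{cluster.name}: mixes B-Series and C-Series hosts")
    return problems


print(check_cluster(Cluster("Automation", "automation", ["B200 M4", "B200 M4", "C220 M4"])))
```

Returning a list of messages rather than raising on the first failure lets a single pass report every problem across all clusters.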

RCM|ESSM

EHC 4.x ESSM aligns with VxBlock Release Certification Matrix (RCM) 6.0.x and VxRack RCM 3.0.x. For more specific details, please refer to the respective EHC ESSM, which can be found at support.emc.com.

EHC Factory Builds

As part of the VxBlock/VxRack factory process, the CPSD Logical Engineering teams carry out the following high-level configurations (among others) based on the customer LCS:

- Cisco MDS and Nexus switches, Cisco UCS, Dell PowerEdge servers, ESXi clusters and storage arrays are configured as per the customer-specified LCS (VLANs, VSANs, IP ranges, host names, zoning, etc.).

- The three EHC management clusters are installed and configured, including vSphere HA and DRS.

- Components installed and configured as per the supported EHC RCM and ESSM.

- AMP Cluster components deployed and configured, including vCenter (vCSA) and PSC appliances.

- Automation and Core Pod components deployed as per the LCS, based on a Small|Medium|Large (1,000|5,000|10,000 VMs) EHC solution.

- NEI Pod components deployed and configured, including the additional Edge Services Gateways and DLRs required for the EHC Automation Pod, as per the LCS.

- Data protection components configured – VPLEX, RecoverPoint, Avamar, Data Domain.
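The Small|Medium|Large options can be read as a simple lookup on the planned VM count. This sketch assumes each figure is the upper bound of its tier; confirm the actual tier boundaries and component counts against the EHC sizing guidance and your LCS.

```python
# Tier ceilings quoted in this post: Small|Medium|Large = 1,000|5,000|10,000 VMs.
# Assumption: each figure is the maximum VM count supported by that tier.
TIERS = [("Small", 1_000), ("Medium", 5_000), ("Large", 10_000)]


def pick_tier(planned_vms: int) -> str:
    """Return the smallest tier whose ceiling covers the planned VM count."""
    if planned_vms < 0:
        raise ValueError("planned_vms must be non-negative")
    for name, ceiling in TIERS:
        if planned_vms <= ceiling:
            return name
    raise ValueError(f"{planned_vms} VMs exceeds the largest tier ({TIERS[-1][1]:,} VMs)")


print(pick_tier(3_500))  # Medium
```

Picking the smallest covering tier keeps the management footprint proportionate; if growth is expected, sizing against the projected VM count rather than day-one demand avoids a later re-tier.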

Useful EHC References

EHC 4.1 Reference Architecture Guide

EHC 4.1 Concepts & Architecture Guide

EHC 4.1 Administration Guide

EHC 4.1 Infrastructure & Operations Management Guide

EHC 4.1 Security Management Solution Guide