This is the third post in a series covering VCF On VxRail 4.2. The previous posts walked through an example of:

- Deploying a VxRail Management Workload Domain cluster

- Deploying VMware CloudBuilder for VxRail

- Bring-Up of VCF 4.2

- Deploying a VI WLD

Versions used in this example are VCF 4.2 & VxRail 7.0.131. Release Notes:

The end result of this example build is a single-site VCF on VxRail solution that includes a single NSX-T VI Workload Domain (thanks to @HeagaSteve for the architectural diagram):

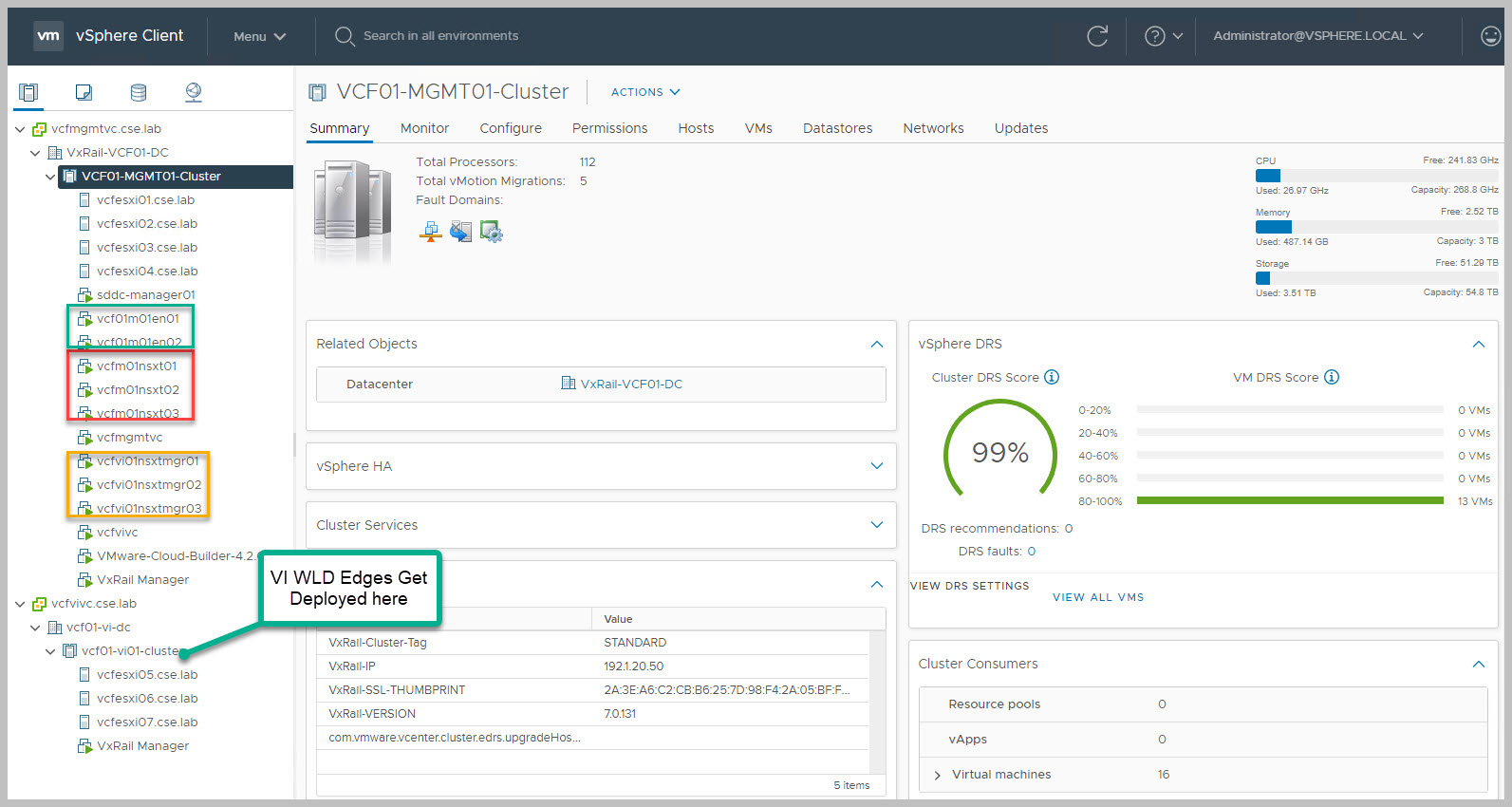

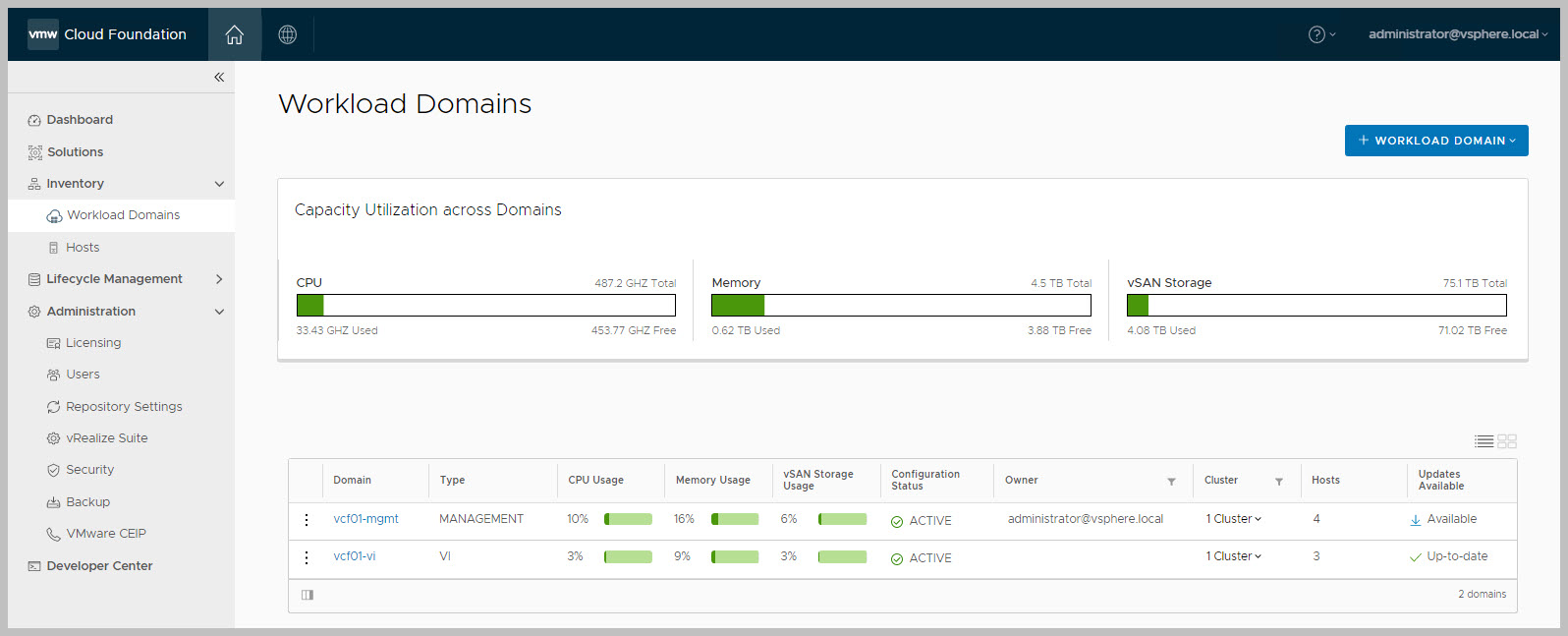

Since the release of VCF 4.0, NSX-T is used exclusively across both the Mgmt and VI Workload Domains (WLDs). As in previous releases, the Management WLD cluster requires a minimum of 4 nodes. It is worth noting that the 3x NSX-T Manager appliances for every WLD are deployed here, as can be seen in the above diagram. As part of the Mgmt WLD deployment, Geneve-backed Application Virtual Networks (AVNs) leveraging BGP peering are deployed and configured in the Mgmt WLD, along with the required 2x NSX-T Edge nodes in an active/active state.

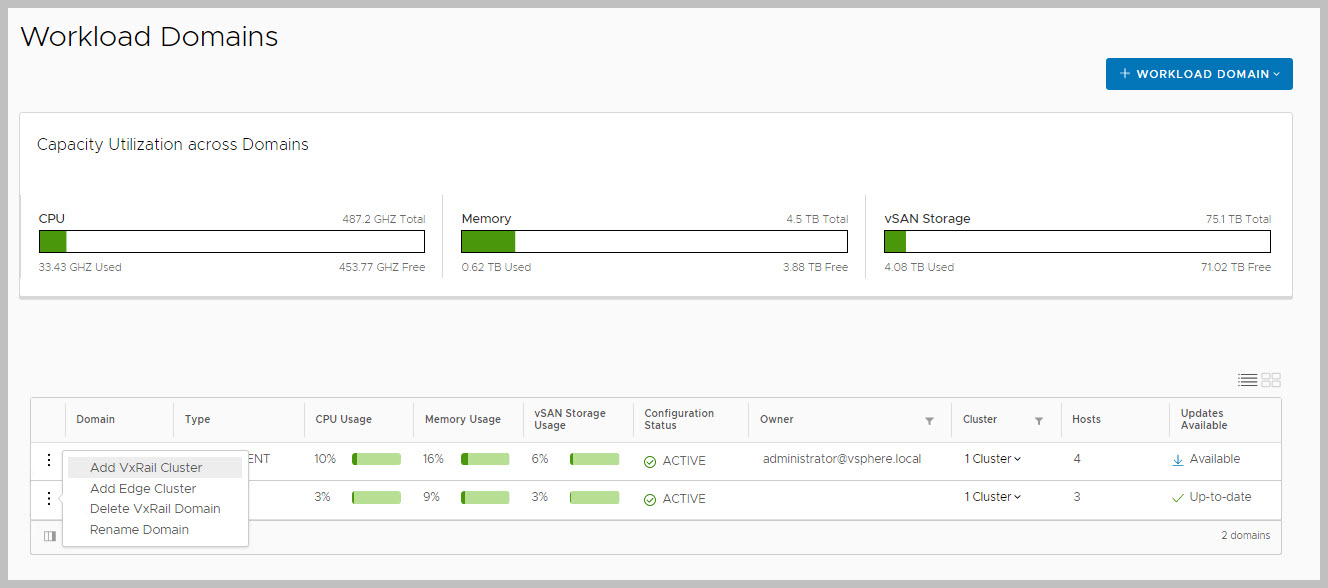

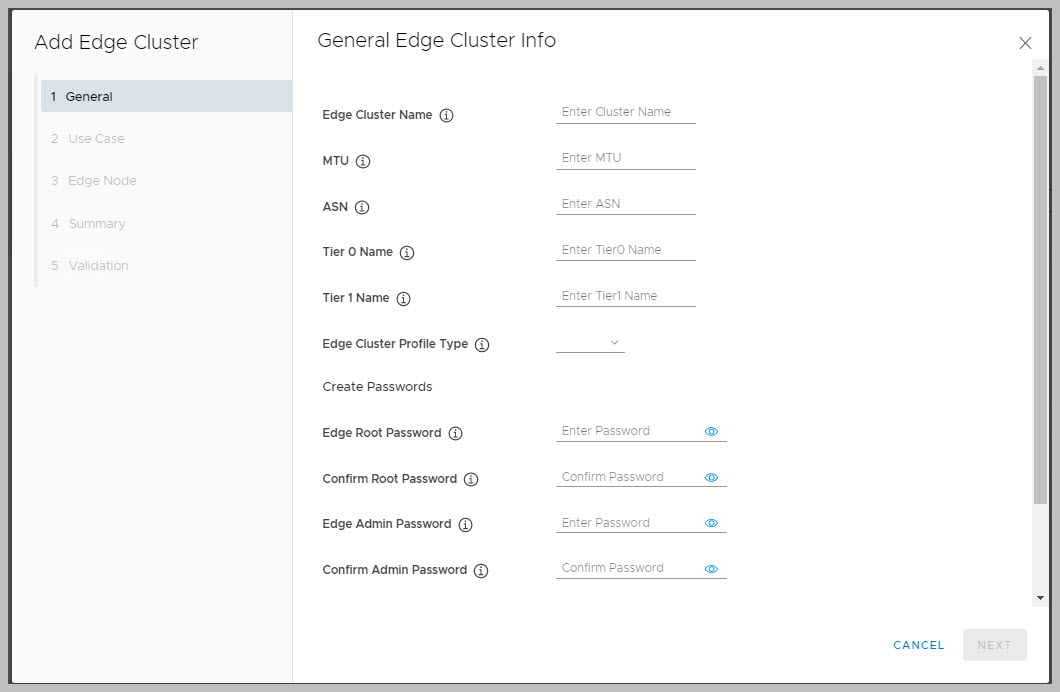

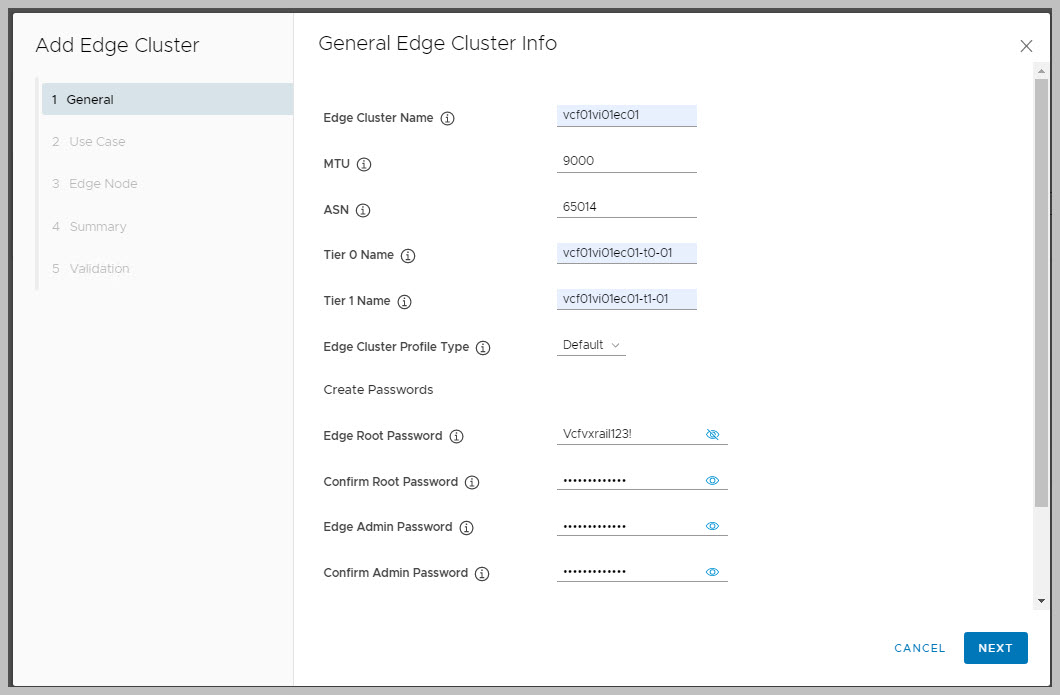

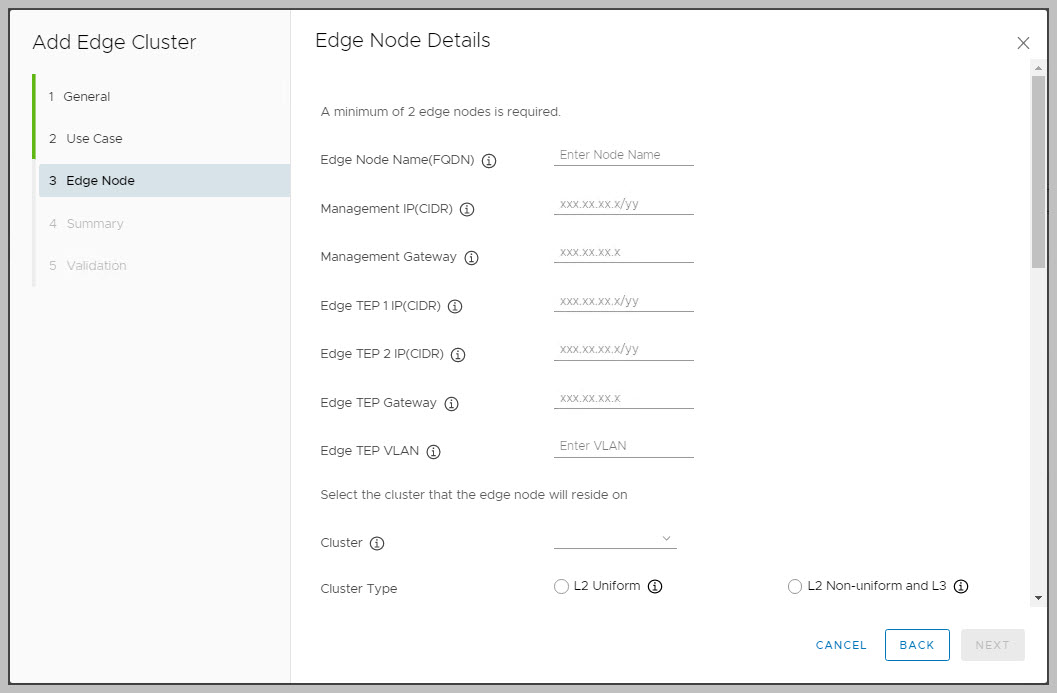

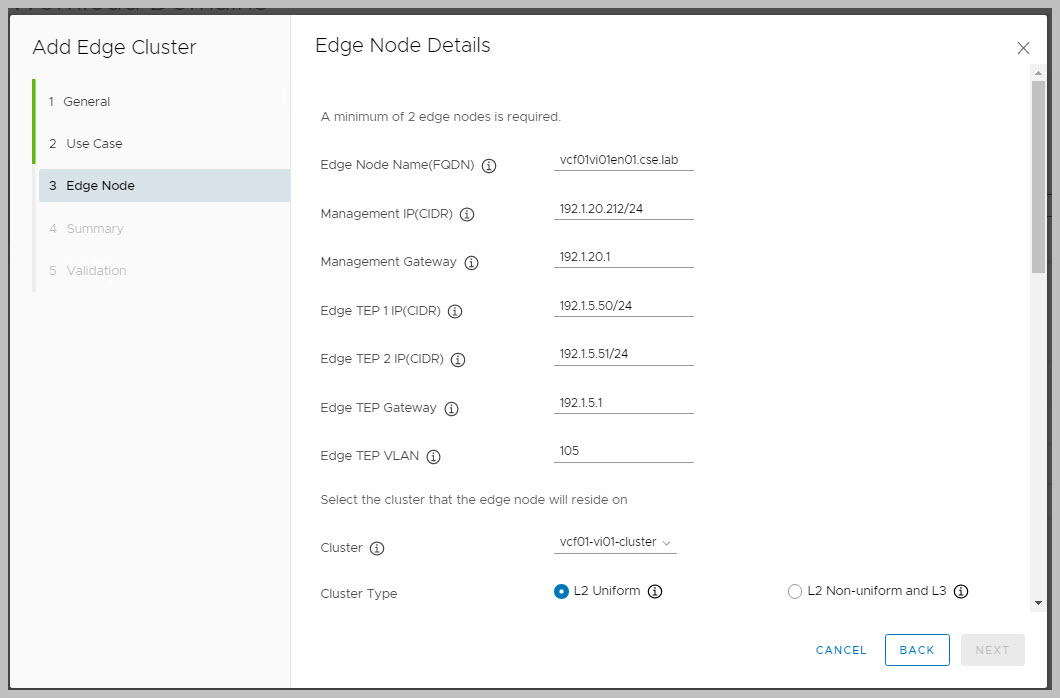

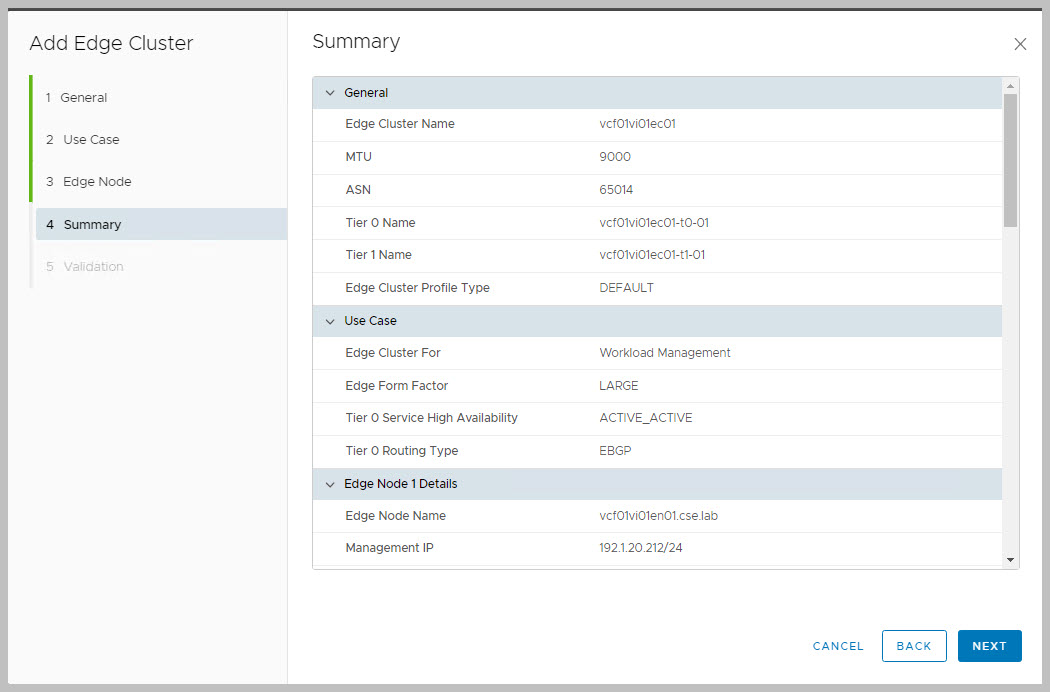

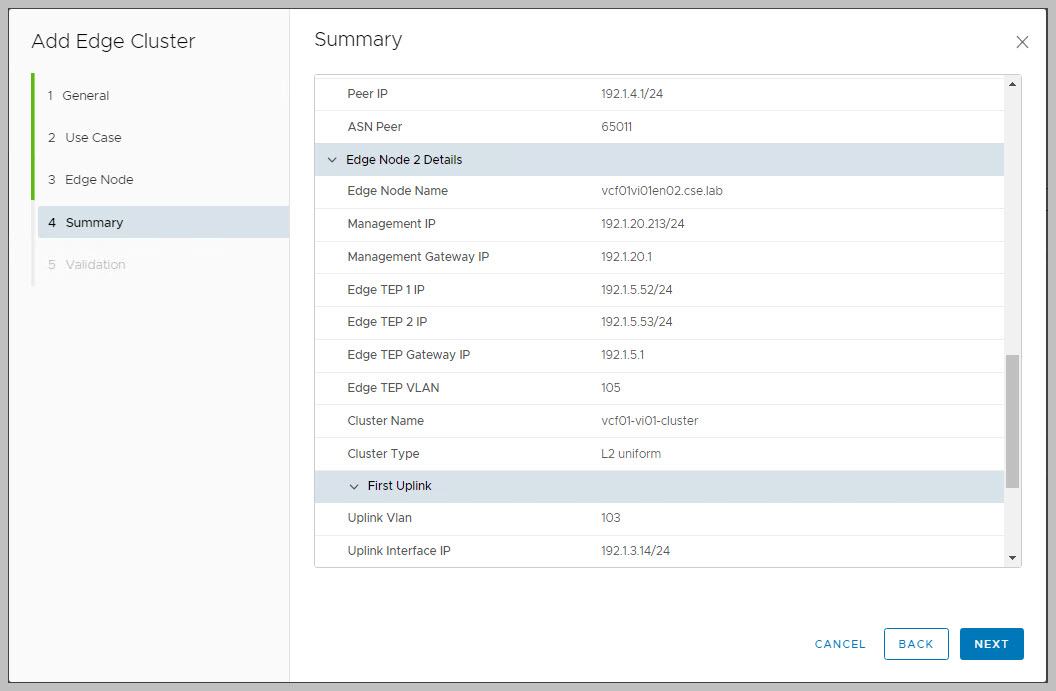

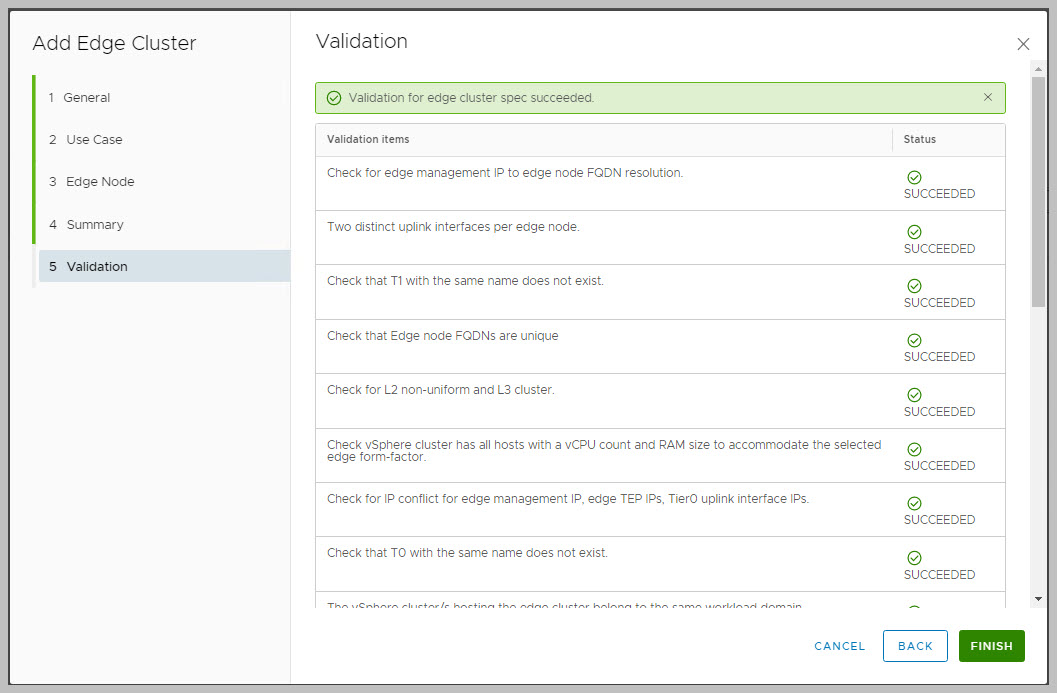

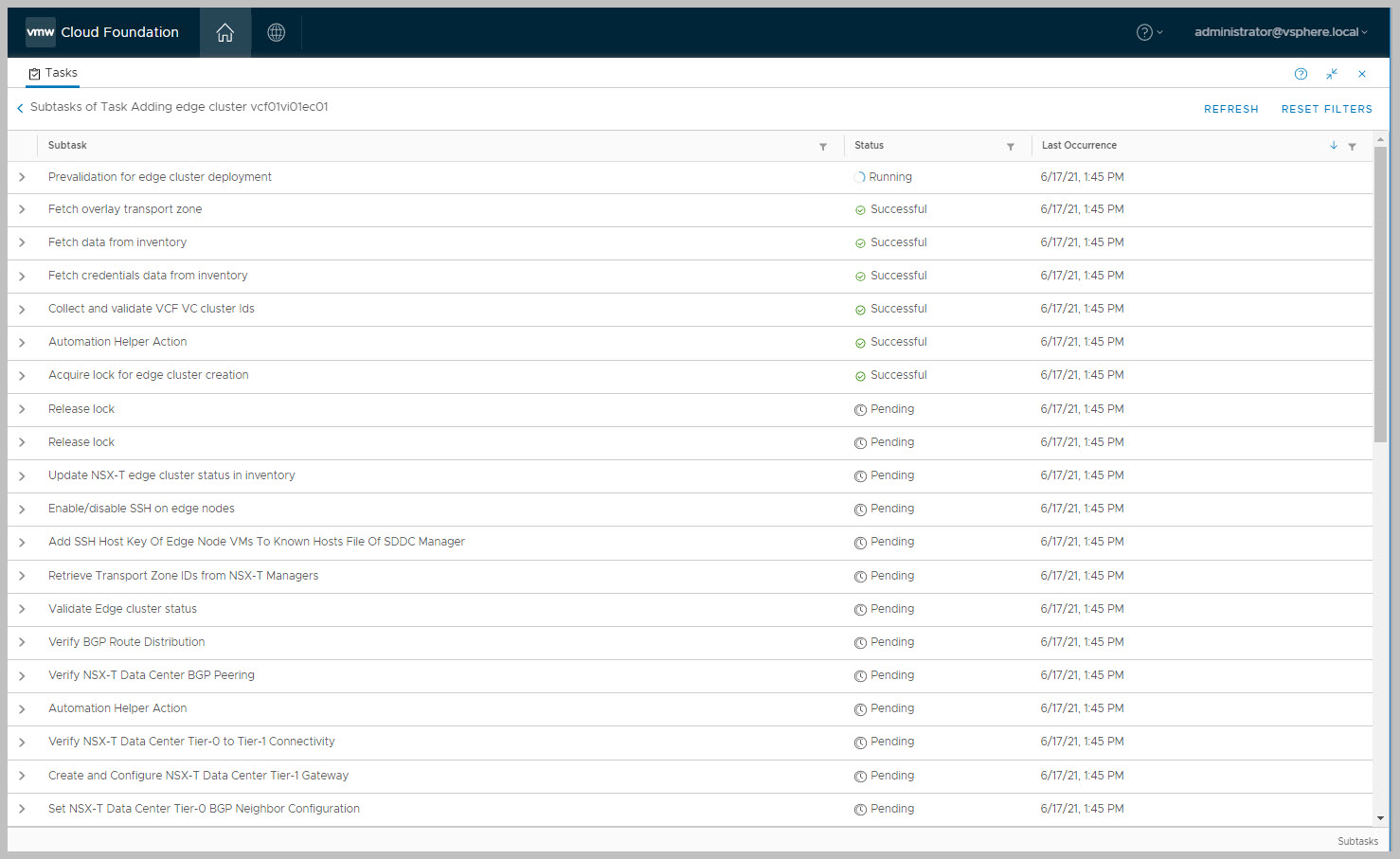

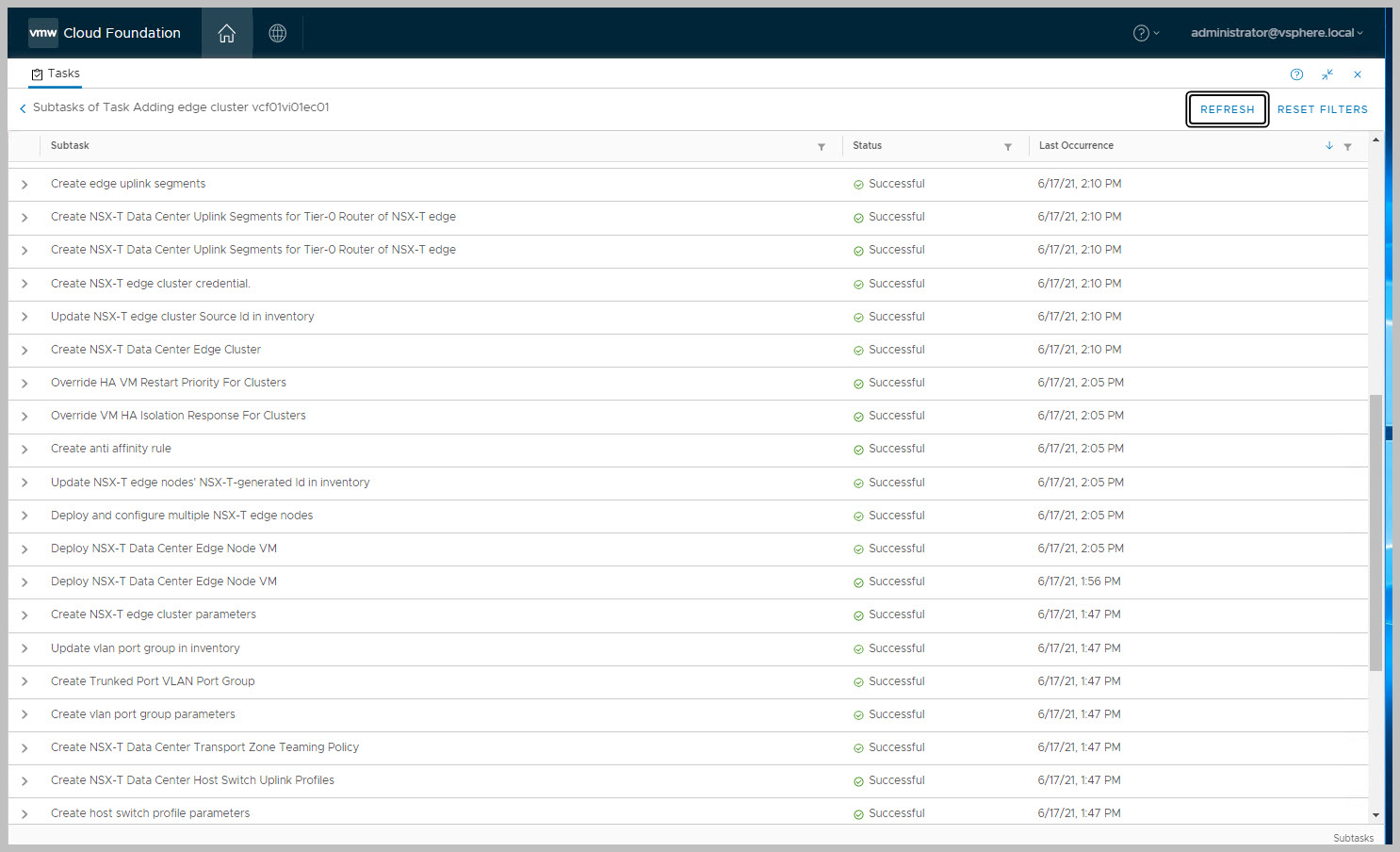

But what about Edge deployments for VI WLDs, you may ask? By default, creating a VI WLD deploys only the 3x NSX-T Managers, which reside in the Management WLD cluster; no NSX-T Edge clusters are deployed as part of this process. As of VCF On VxRail 4.0, SDDC Manager has the built-in capability to automatically deploy NSX-T Edge clusters to a workload domain to provide routing and network services as per the VMware VVD Edge design. It is worth noting that an NSX-T Edge cluster does not have a one-to-one relationship with a vSphere cluster, and a vSphere cluster can run multiple NSX-T Edge clusters. The following steps detail how to deploy an NSX-T Edge cluster leveraging SDDC Manager automation (please also see below for an excellent demo provided by Cathal Prendeville).

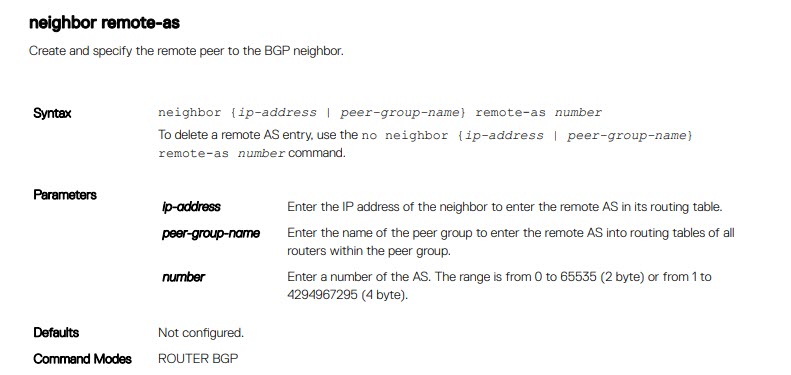

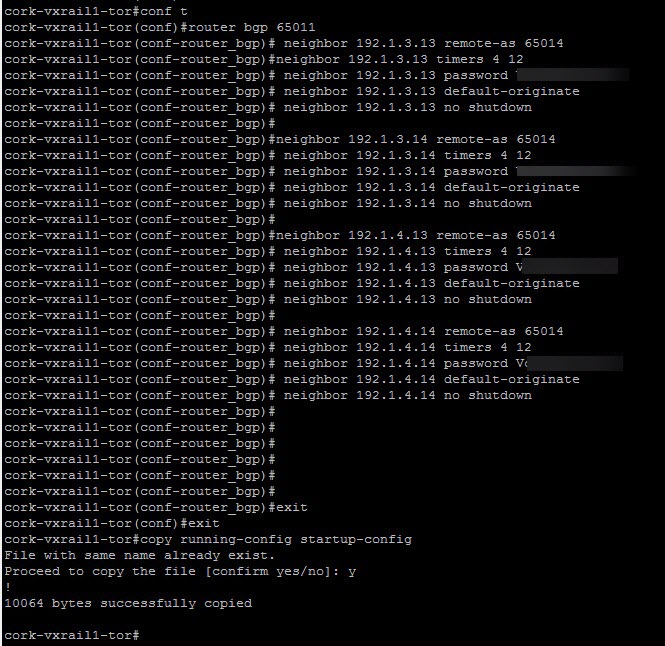

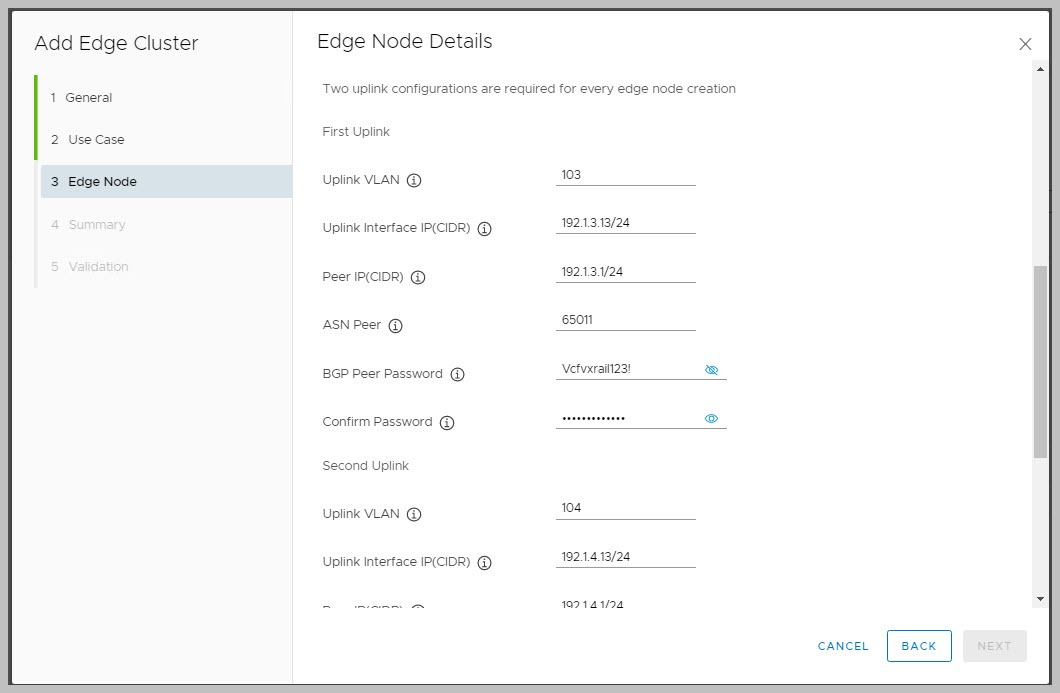

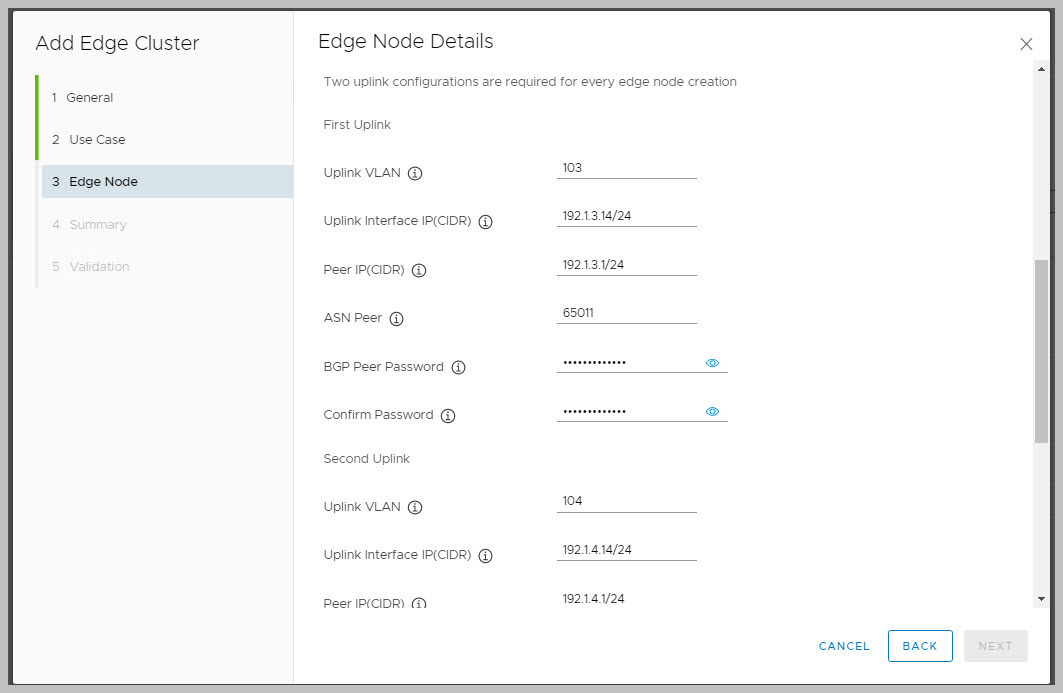

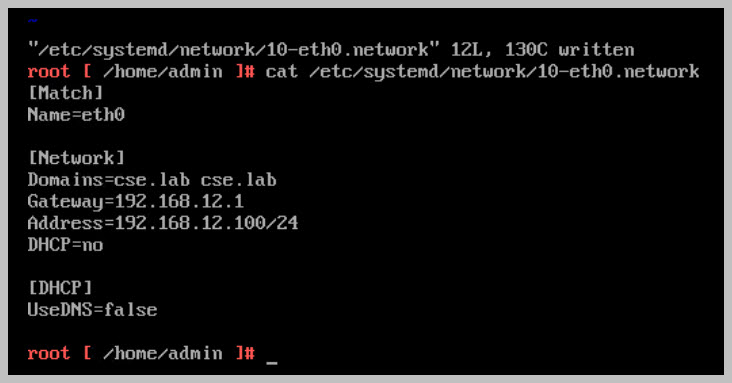

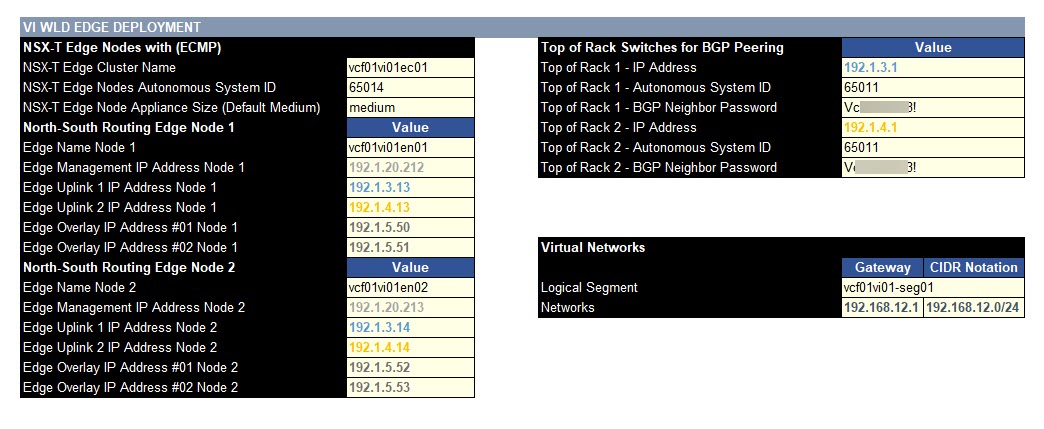

Required details for the deployment include the usual DNS entries, FQDN, IP Addresses, BGP autonomous system number (ASN) and BGP passwords:
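Before entering these details into SDDC Manager, it can help to sanity-check them offline. The following is a minimal sketch only, not part of the VCF tooling: the function names and the sample ASN and addresses are hypothetical, and the only checks made are that the ASN sits in the 32-bit range, that it can be classified against the private-use ranges from RFC 6996, and that each Edge node IP falls inside the gateway's subnet.

```python
import ipaddress

# Private-use ASN ranges reserved by RFC 6996 (16-bit and 32-bit).
PRIVATE_ASN_RANGES = ((64512, 65534), (4200000000, 4294967294))


def is_valid_asn(asn):
    """True if the ASN fits the 32-bit BGP ASN space (0 is excluded)."""
    return 1 <= asn <= 4294967295


def is_private_asn(asn):
    """True if the ASN falls inside one of the private-use ranges."""
    return any(low <= asn <= high for low, high in PRIVATE_ASN_RANGES)


def check_edge_inputs(asn, node_ips, gateway_cidr):
    """Return a list of problems found; an empty list means no issues."""
    problems = []
    if not is_valid_asn(asn):
        problems.append(f"ASN {asn} is outside 1-4294967295")

    gateway = ipaddress.ip_interface(gateway_cidr)
    for ip in node_ips:
        address = ipaddress.ip_address(ip)
        if address not in gateway.network:
            problems.append(f"{ip} is not in {gateway.network}")
        elif address == gateway.ip:
            problems.append(f"{ip} clashes with the gateway address")

    if len(set(node_ips)) != len(node_ips):
        problems.append("duplicate Edge node IP addresses")
    return problems


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    print(check_edge_inputs(65003, ["192.168.10.2", "192.168.10.3"], "192.168.10.1/24"))
```

DNS forward and reverse lookups for each FQDN are deliberately left out here, since they depend on the environment the script runs in.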

Physical network requirements include:

• BGP is configured on each peering router.

• Two Uplink VLANs for Tier-0 Edge external connectivity to the physical network.

• Uplink 1 VLAN on TOR/Leaf A, Uplink 2 VLAN on TOR/Leaf B.

• Edge Overlay VLAN is created on both physical switches.

• Edge Overlay VLAN must be different from the Host Overlay VLAN.

• Edge Overlay VLAN and Host Overlay VLAN must be routable to each other.

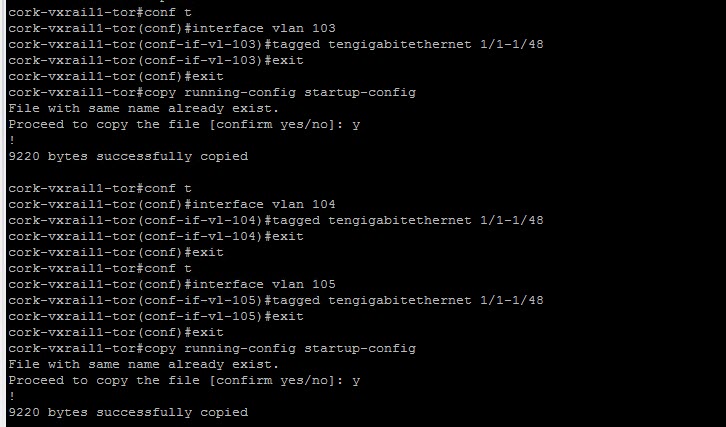
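The VLAN rules listed above are easy to get wrong on a planning sheet, so here is a small illustrative sketch that checks a VLAN plan against them. The `EdgeVlanPlan` class, its field names and the sample VLAN IDs are all hypothetical; the sketch only covers what can be checked from the IDs themselves (valid range, two distinct uplink VLANs, Edge Overlay different from Host Overlay). Routability between the two overlay VLANs has to be verified on the physical network.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EdgeVlanPlan:
    """Hypothetical container for the VLAN IDs used by an Edge cluster."""
    uplink1_vlan: int        # Uplink 1, TOR/Leaf A
    uplink2_vlan: int        # Uplink 2, TOR/Leaf B
    edge_overlay_vlan: int
    host_overlay_vlan: int


def validate_vlan_plan(plan):
    """Return a list of rule violations; an empty list means the plan passes."""
    problems = []

    # 802.1Q allows VLAN IDs 1-4094 for normal use.
    for name, vlan in vars(plan).items():
        if not 1 <= vlan <= 4094:
            problems.append(f"{name}={vlan} is outside 1-4094")

    if plan.uplink1_vlan == plan.uplink2_vlan:
        problems.append("Uplink 1 and Uplink 2 must be two separate VLANs")

    if plan.edge_overlay_vlan == plan.host_overlay_vlan:
        problems.append("Edge Overlay VLAN must differ from Host Overlay VLAN")

    return problems


if __name__ == "__main__":
    # Hypothetical VLAN IDs for illustration only.
    print(validate_vlan_plan(EdgeVlanPlan(111, 112, 113, 114)))
```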

Example DELL switch configs detailing the VLAN and BGP requirements:

SDDC MANAGER AUTOMATED NSX-T EDGE DEPLOYMENT STEPS

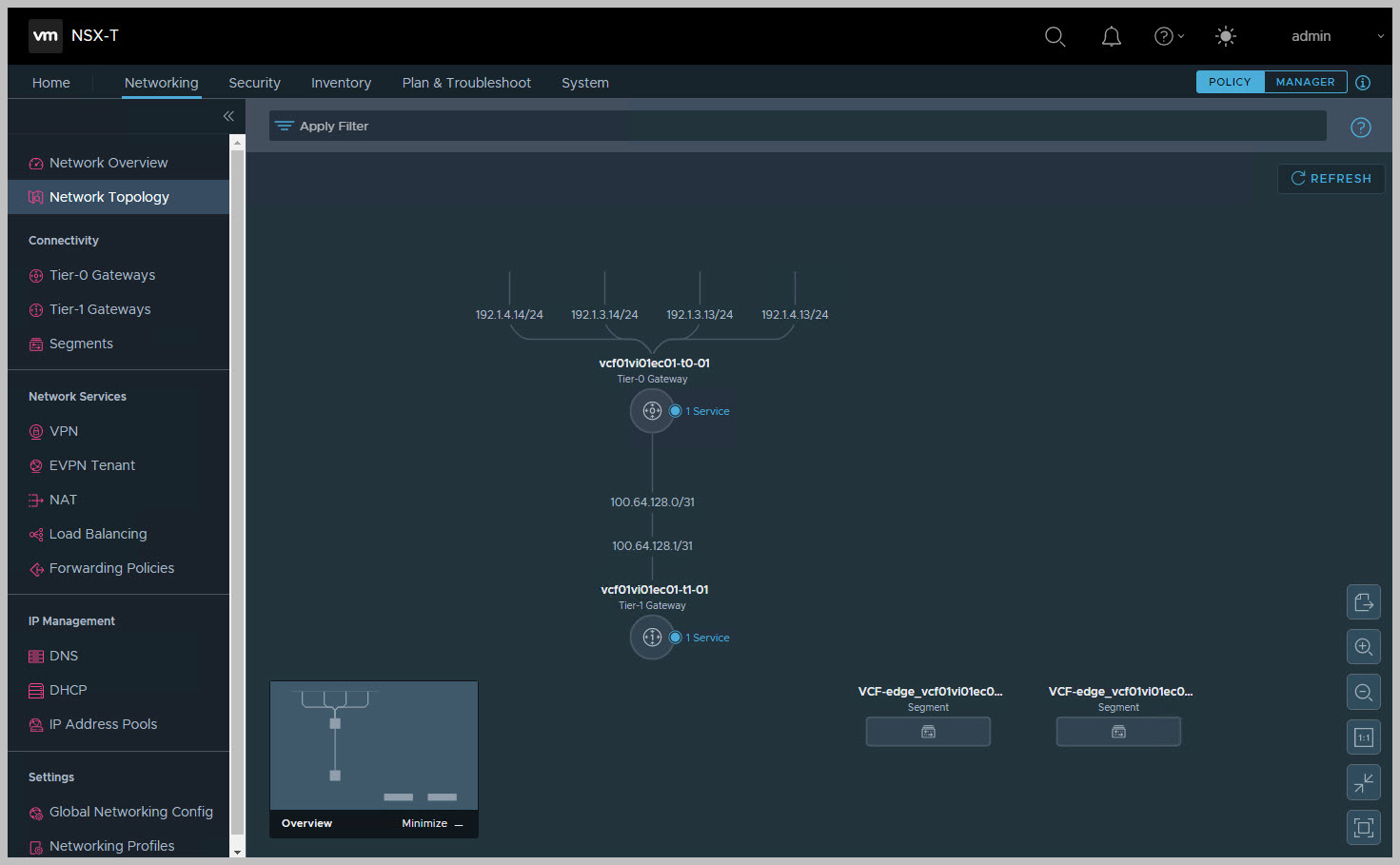

NSX-T Edge Network Topology

The following depicts the architectural view of the Edge solution from within NSX-T Manager. The Tier-0 gateway provides routing to the external network, while the Tier-1 gateway, connected to the Tier-0, provides north/south connectivity for the internal network.

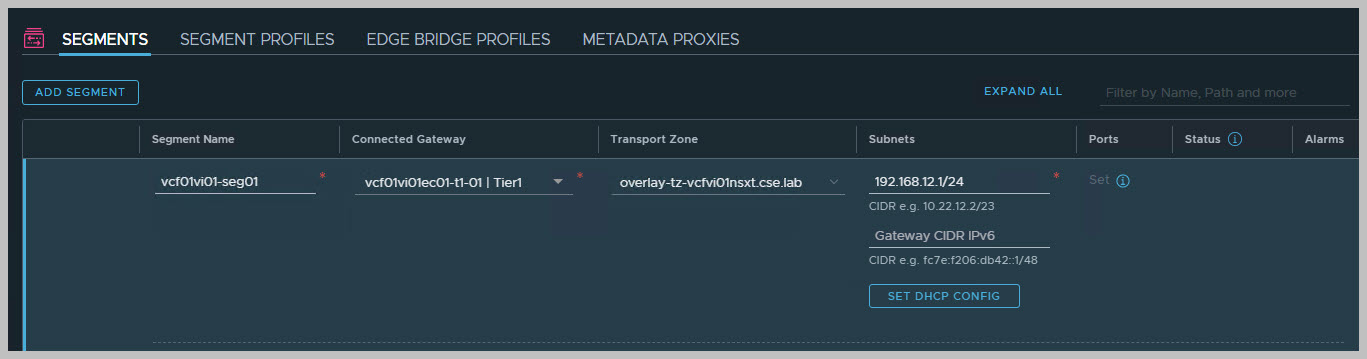

Creating An Overlay-Backed Segment

From NSX Manager, select Networking > Segments and click Add Segment, connecting the segment to the Tier-1 gateway:

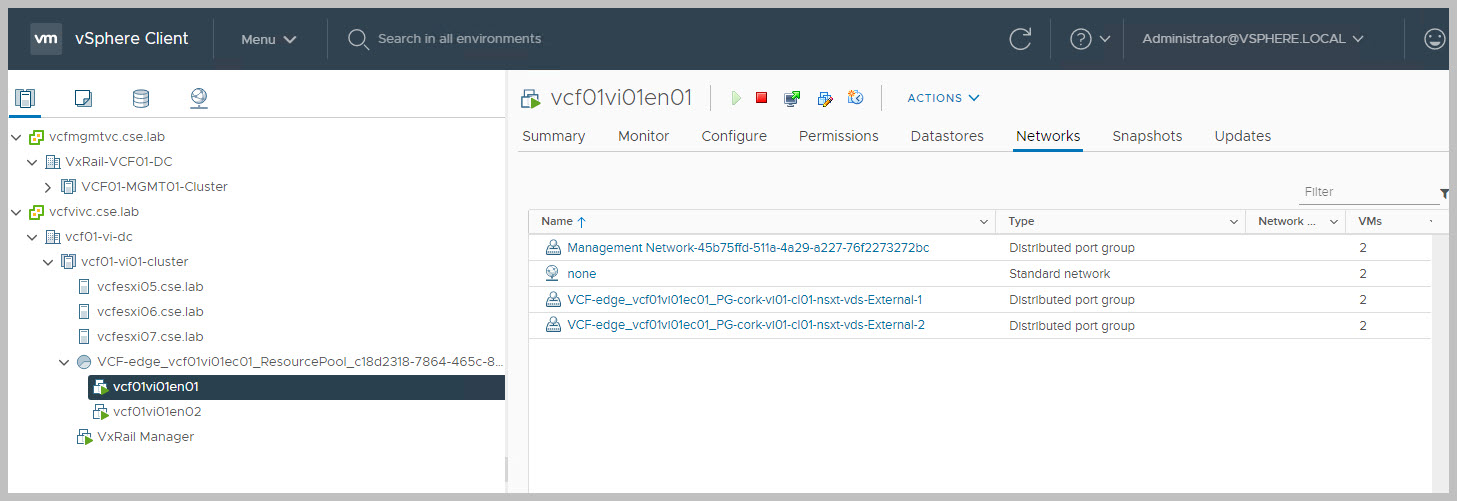

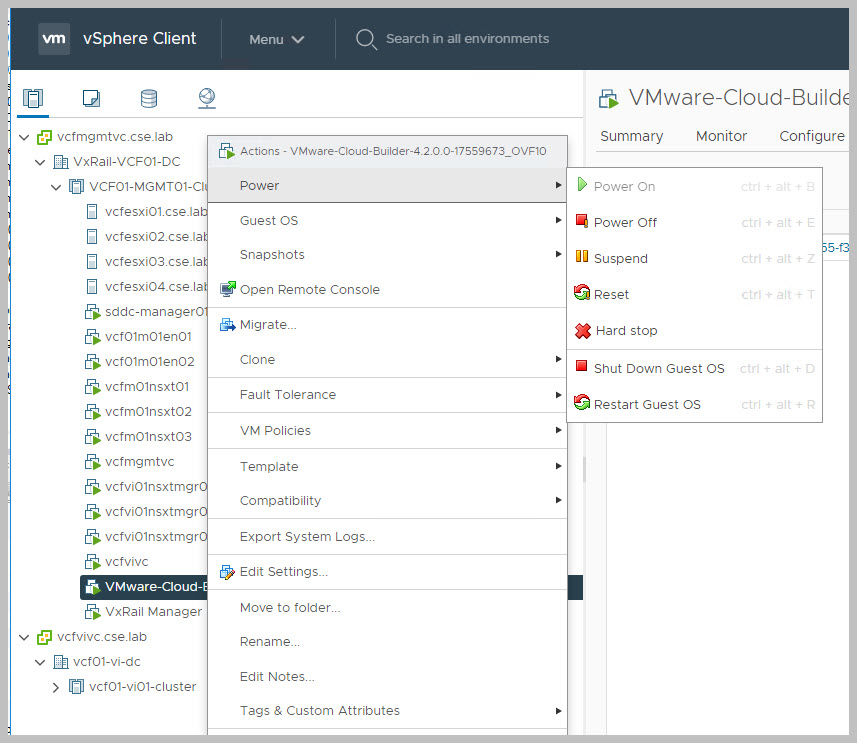

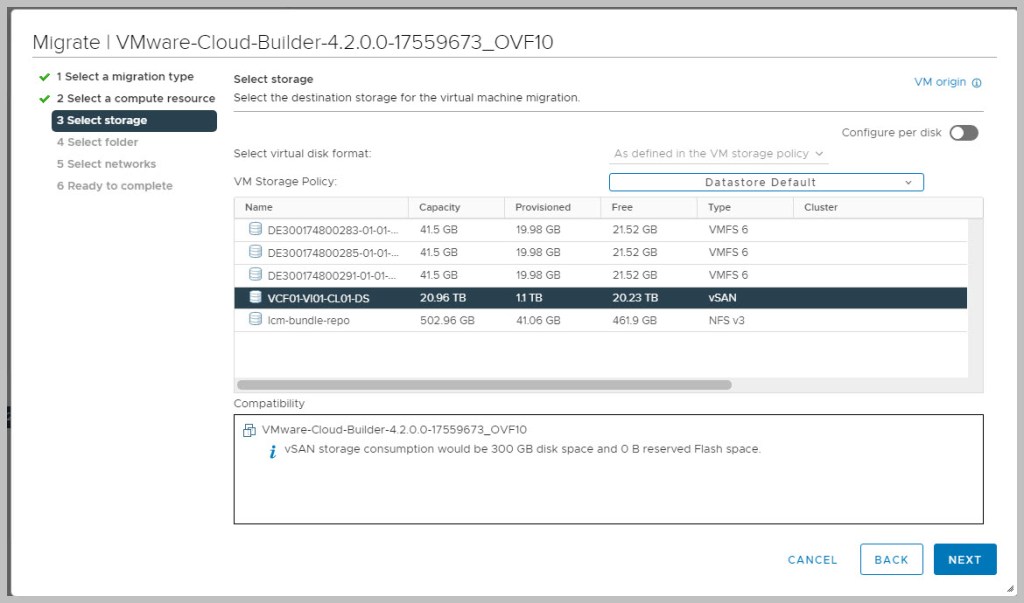

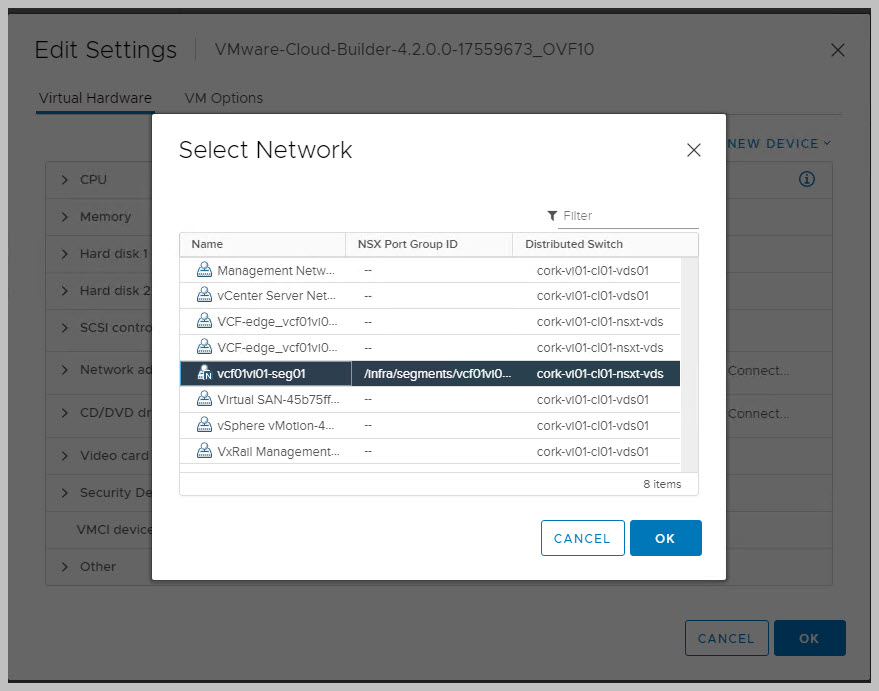

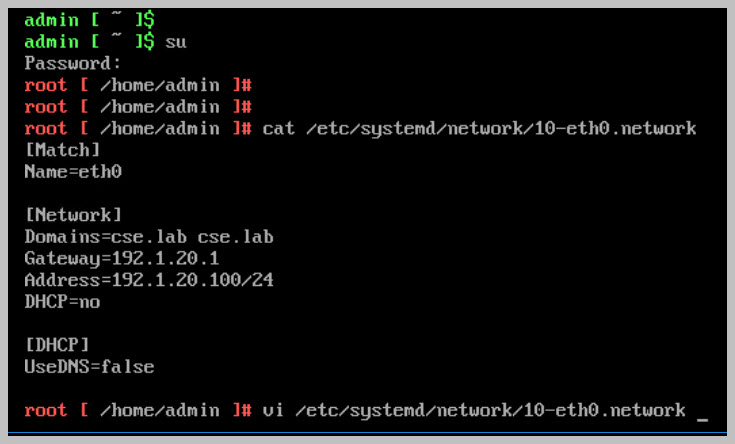

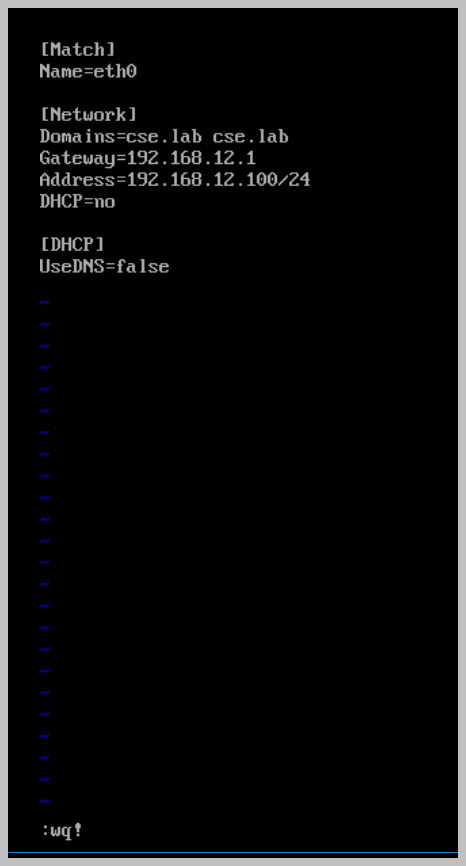

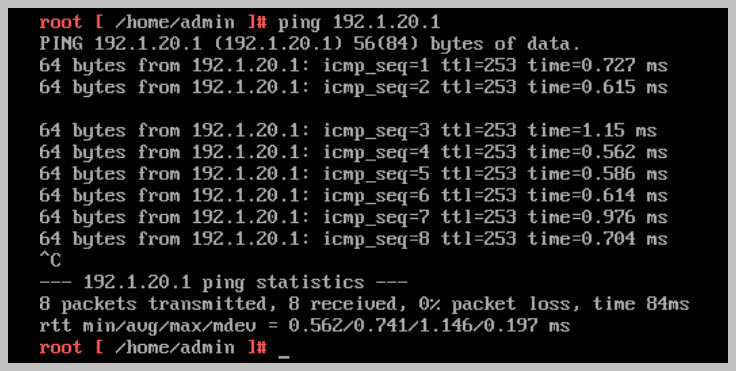
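The same segment could in principle be created through the NSX-T Policy API instead of the UI, by sending a PATCH to `/policy/api/v1/infra/segments/<segment-id>` on the NSX Manager. The sketch below only builds the request body and does not send anything. The helper name, segment name, Tier-1 ID, transport zone ID and gateway address are all hypothetical placeholders, and the `default` site and enforcement point in the transport zone path are an assumption that should be checked against your own environment.

```python
import json

# Assumes the default site and enforcement-point names in the Policy API path.
TZ_PATH = "/infra/sites/default/enforcement-points/default/transport-zones/{tz_id}"


def build_segment_payload(display_name, tier1_id, transport_zone_id, gateway_cidr):
    """Build the JSON body for an overlay segment attached to a Tier-1 gateway."""
    return {
        "display_name": display_name,
        # Connects the segment to the Tier-1 gateway.
        "connectivity_path": f"/infra/tier-1s/{tier1_id}",
        # Overlay transport zone that backs the segment.
        "transport_zone_path": TZ_PATH.format(tz_id=transport_zone_id),
        # Gateway IP for the segment, in CIDR notation.
        "subnets": [{"gateway_address": gateway_cidr}],
    }


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    body = build_segment_payload(
        "wld01-overlay-seg01", "wld01-t1-gw01", "overlay-tz-id", "10.50.0.1/24"
    )
    print(json.dumps(body, indent=2))
```

Building the body separately from sending it keeps the example runnable without access to an NSX Manager, and makes it easy to review the payload before applying it.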

We can test the newly created overlay segment by migrating the CloudBuilder VM from the Mgmt WLD to the VI WLD:

This completes the VCF On VXRAIL 4.2 – Walkthrough series of posts!

I hope these were of some benefit.

Thanks for reading!