VxRail – Welcome Enterprise Hybrid Cloud (EHC)


Introduced as part of the EHC 4.1.1 release (code-named “Challenger”) – GA March ’17

Simple, Easy, Start Small, Scalable!

The VxRail HCI Appliance now has the option of including the ‘Enterprise Hybrid Cloud 4.1.1’ platform as part of an automated greenfield installation. Thousands of hours of engineering, design, testing and validation have gone into this release, with a laser focus on delivering an automated ‘EHC on VxRail’ onsite installation. Engineered on the VxRail P470(F) Appliances, ‘EHC on VxRail’ offers a lighter-weight, smaller-footprint hybrid cloud solution with which you can start small and scale out as you grow.

Hardware Specification for ‘EHC on VxRail’

For this initial release of ‘EHC on VxRail’, the supported hardware models are the P470(F) appliances (one node per 2U chassis), each running dual-socket Intel Broadwell processors. This covers both hybrid and all-flash variations, but hybrid and all-flash nodes/appliances cannot be mixed. The VxRail ‘P’ Series nodes, based on the Dell R730 server, are high-performance nodes optimized for heavy workloads: the VxRail P470 (hybrid) and the VxRail P470F (all-flash). The base entry hardware spec for EHC accommodates both the EHC Management stack and a 200 VM Tenant Workload environment (Note: the reference Tenant VM spec is 1 vCPU / 2GB RAM / 100GB disk / 25 IOPS). While the 200 VM example is an absolute minimum, most configurations are expected to be larger, as ‘EHC on VxRail’ can scale to the upper limit of what the VxRail appliance offers today: 64 nodes in a single ESXi cluster (clusters of more than 32 nodes require an RPQ). Please work with your local DellEMC Cloud Specialist to leverage the VxRail & EHC sizing tools to correctly size your solution.
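To make the entry point concrete, the reference Tenant VM spec can be multiplied out into an aggregate tenant demand. The snippet below is only a rough sketch: the names `TenantVmSpec` and `aggregate_demand` are made up for illustration, and the result is raw tenant demand only. It does not account for the EHC Management stack, vSAN overhead or failure tolerance, and it is no substitute for the official VxRail & EHC sizing tools.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TenantVmSpec:
    """Reference Tenant VM spec quoted in the post (hypothetical class name)."""
    vcpus: int = 1
    ram_gb: int = 2
    disk_gb: int = 100
    iops: int = 25


def aggregate_demand(vm_count, spec=TenantVmSpec()):
    """Multiply the per-VM spec by the VM count to get raw tenant demand."""
    return {
        "vcpus": vm_count * spec.vcpus,
        "ram_gb": vm_count * spec.ram_gb,
        "disk_gb": vm_count * spec.disk_gb,
        "iops": vm_count * spec.iops,
    }


# The 200 VM entry point described above.
entry_point = aggregate_demand(200)
print(entry_point)
# {'vcpus': 200, 'ram_gb': 400, 'disk_gb': 20000, 'iops': 5000}
```

In other words, the 200 VM entry point corresponds to roughly 200 vCPUs, 400GB of RAM, 20TB of disk and 5,000 IOPS of tenant workload before any overheads are added.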

Base Minimum Hardware Specification for ‘EHC on VxRail’ (200 VM Entry Point):

– 4x VxRail P470(F) appliances

– 2x 10GbE ToR switches (to avoid a single point of failure)

– 1x Dell R730 server for DDVE (a 2nd R730 is optional)
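The node rules stated in this post (a four-appliance base, no mixing of hybrid and all-flash, an RPQ above 32 nodes, and a 64-node ceiling) can be expressed as a quick sanity check. This is a minimal sketch with hypothetical names (`check_cluster`, the constants); it encodes only the rules mentioned here, treats the four-appliance base spec as a minimum node count, and is not an official validation tool.

```python
# Rules taken from this post; all names below are illustrative only.
SUPPORTED_MODELS = {"P470", "P470F"}  # hybrid and all-flash variants
MIN_NODES = 4        # base minimum spec: 4 appliances, one node each
RPQ_THRESHOLD = 32   # more than 32 nodes requires an RPQ
MAX_NODES = 64       # upper limit of a single ESXi cluster


def check_cluster(node_models):
    """Return a list of findings; an empty list means no rule was tripped."""
    findings = []
    models = set(node_models)
    count = len(node_models)

    unsupported = sorted(models - SUPPORTED_MODELS)
    if unsupported:
        findings.append("unsupported models: " + ", ".join(unsupported))
    if SUPPORTED_MODELS <= models:
        findings.append("hybrid and all-flash nodes cannot be mixed")

    if count < MIN_NODES:
        findings.append(f"{count} nodes is below the base minimum of {MIN_NODES}")
    elif count > MAX_NODES:
        findings.append(f"{count} nodes exceeds the {MAX_NODES}-node limit")
    elif count > RPQ_THRESHOLD:
        findings.append(f"{count} nodes requires an RPQ")
    return findings


print(check_cluster(["P470F"] * 4))                 # base all-flash cluster
print(check_cluster(["P470"] * 2 + ["P470F"] * 2))  # mixed node types
print(check_cluster(["P470"] * 40))                 # large hybrid cluster
```

The first call returns an empty list, the second flags the mixed node types, and the third notes that an RPQ is required.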

‘EHC on VxRail’ Support Matrix (ESSM)

The ‘EHC on VxRail’ Support Matrix (ESSM) aligns more closely with VMware Validated Designs (VVD), and future EHC releases will tighten that alignment further. Some of the components included in the ‘EHC on VxRail’ solution are as follows:

EHC Features on VxRail

– Infrastructure-as-a-service (IaaS)

– Backup-as-a-service (BaaS)

– Encryption-as-a-service (EaaS)

The EHC 4.1.1 release allows EHC to run on a single VxRail cluster functioning as a single instance of EHC, which provides an on-demand self-service catalog that includes the features listed above.

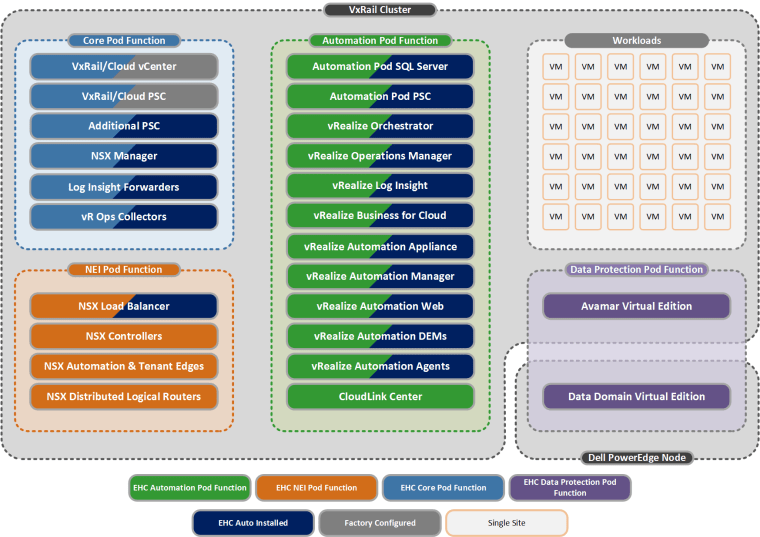

Included in this ‘EHC on VxRail’ release is an option to bolt on a BaaS solution that incorporates Avamar VE, with an option to include Data Domain VE if required. Note: DDVE runs on a separate Dell PowerEdge host, as depicted in the ‘EHC on VxRail’ architecture diagram. Encryption-as-a-Service is also included and utilises CloudLink SecureVM. STaaS functions do not apply in an ‘EHC on VxRail’ solution, as the vSAN storage is fully pre-provisioned.

Future releases will introduce multi-site managed remote endpoints and DRaaS solutions leveraging technologies such as RP4VM. Until multi-site is introduced, additional VxRail Appliances can be added, but only as infrastructure-as-a-service vCenter endpoints.

The supported cluster type for ‘EHC on VxRail’ 4.1.1 is ‘VS1S’ (vSAN Storage on One Site). The supported EHC BaaS backup type is ‘1C1VC’ (1 Copy, 1 vCenter), which covers single-site configurations today, with replication targeted for a future release. Please refer to the EHC Concepts & Architecture Guide for greater detail.

‘EHC on VxRail’ Architecture

The ‘EHC on VxRail’ Management Pod functions (Core, NEI & Automation) and the Tenant Pod workloads reside on the same VxRail Appliance cluster and are separated using Resource Pools.

The architecture image used in this post was taken from the EHC Architecture Guide. For more detailed architectural explanations, please refer to the following EHC 4.1.1 guides:

EHC Reference Architecture Guide

EHC Concepts and Architecture Guide

https://community.emc.com/community/connect/everything-cloud

http://www.emc.com/collateral/technical-documentation/h15830r-ehc-4-1-1-admin-guide.pdf
