EMC VNX – FAST CACHE

EMC ‘FAST Cache’ technology improves the performance of the VNX Storage Array by adding Flash drives as a secondary cache that works hand-in-hand with the DRAM cache. EMC recommends first using available Flash drives for FAST Cache and then adding Flash drives as a tier to selected FAST VP pools as required. FAST Cache works with all VNX systems (and also the CX4) and is activated by installing the required FAST Cache Enabler. FAST Cache works with both traditional ‘FLARE LUNs’ and ‘VP Pools’.

Note: FAST Cache is enabled at the pool-wide level and cannot be enabled selectively for specific LUNs within the Pool.

The initial configuration is quite simple: after adding the required quantity of drives, you can create FAST Cache through the ‘System Properties’ console in Unisphere, which enables FAST Cache for system-wide use.

Or you can use Navicli commands to create and monitor the initialization status of FAST Cache:

naviseccli -h SPA_IP cache -fast -create -disks disks -mode rw -rtype r_1

Check on the status of FAST Cache creation:

naviseccli -h SPA_IP cache -fast -info -status

To enable or disable FAST Cache on a specific VP pool:

naviseccli -h SPA_IP storagepool -modify -name "Pool_Name" -fastcache off|on

Check which pools have FAST Cache enabled or disabled:

naviseccli -h SPA_IP storagepool -list -fastcache

If the FAST Cache configuration requires any modification, FAST Cache must first be disabled. Disabling (destroying) FAST Cache flushes all dirty blocks back to disk; once the disable operation has completed, you can re-create FAST Cache with the new configuration.

Configuration Options

FAST Cache configuration options range from 100GB on a CX4-120 to 4.2TB of FAST Cache on a VNX-8000.

CX4 Systems:

CX4-120 – 100GB

CX4-240 – 200GB

CX4-480 – 800GB

CX4-960 – 2000GB

VNX1 Systems:

VNX 5100 – 100GB

VNX 5300 – 500GB

VNX 5500 – 1000GB

VNX 5700 – 1500GB

VNX 7500 – 2100GB

VNX2 Systems:

VNX 5200 – 600GB

VNX 5400 – 1000GB

VNX 5600 – 2000GB

VNX 5800 – 3000GB

VNX 7600 – 4200GB

VNX 8000 – 4200GB
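Because FAST Cache drives are bound as RAID-1 mirrors, the drive count for a given cache size is roughly twice the usable capacity divided by the drive size. The sketch below is only a sizing aid under that assumption, using nominal drive capacities; the function name `drives_required` and the dictionary are illustrative and not part of any EMC tool.

```python
# Maximum FAST Cache capacity per model in GB, copied from the list in this post.
MAX_FAST_CACHE_GB = {
    "CX4-120": 100, "CX4-240": 200, "CX4-480": 800, "CX4-960": 2000,
    "VNX 5100": 100, "VNX 5300": 500, "VNX 5500": 1000,
    "VNX 5700": 1500, "VNX 7500": 2100,
    "VNX 5200": 600, "VNX 5400": 1000, "VNX 5600": 2000,
    "VNX 5800": 3000, "VNX 7600": 4200, "VNX 8000": 4200,
}


def drives_required(cache_gb: int, drive_gb: int) -> int:
    """Drives needed for cache_gb of FAST Cache built from RAID-1 pairs."""
    if cache_gb <= 0 or drive_gb <= 0:
        raise ValueError("sizes must be positive")
    if cache_gb % drive_gb != 0:
        raise ValueError("cache size is not a whole number of drives")
    # One primary plus one secondary drive per mirror.
    return 2 * (cache_gb // drive_gb)


if __name__ == "__main__":
    # Maximum VNX 8000 configuration using 200GB FAST Cache SSDs.
    print(drives_required(MAX_FAST_CACHE_GB["VNX 8000"], 200))
```

For the VNX 8000 maximum with 200GB drives this gives 42 drives, which matches the 42 x 200GB layout discussed later in this post.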

FAST Cache drives are configured as RAID-1 mirrors, and it is good practice to balance the drives across all available back-end buses. FAST Cache drives are extremely I/O intensive, and placing more than the recommended maximum on a bus may saturate that bus. The number of FAST Cache drives per back-end bus differs for each system, but ideally aim for no more than 4 drives per bus on a CX/VNX1 system and 8 on a VNX2. On a CX/VNX1 it is best practice to avoid placing drives in the DPE or DAE 0_0 if doing so would leave one drive of a mirrored pair in another DAE; for example, DO NOT mirror a drive in 0_0 with a drive in 1_0.
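To make the per-bus guideline concrete, here is a minimal sketch that spreads a drive count as evenly as possible across a number of back-end buses and rejects layouts that would exceed the per-bus maximum. The function name and the bus count of 8 used in the example are hypothetical; check the actual number of back-end buses on your array.

```python
def spread_across_buses(drive_count: int, bus_count: int, max_per_bus: int) -> list[int]:
    """Return the number of FAST Cache drives to place on each back-end bus."""
    if bus_count <= 0 or max_per_bus <= 0 or drive_count < 0:
        raise ValueError("invalid layout parameters")
    if drive_count > bus_count * max_per_bus:
        raise ValueError("not enough buses to stay within the per-bus maximum")
    base, extra = divmod(drive_count, bus_count)
    # The first `extra` buses carry one drive more than the rest.
    return [base + 1 if bus < extra else base for bus in range(bus_count)]


if __name__ == "__main__":
    # 42 drives, a hypothetical 8 buses, VNX2 guideline of 8 drives per bus.
    print(spread_across_buses(42, 8, 8))
```

The sketch only balances counts; it does not decide which slots to use or which drives form a mirrored pair.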

The order the drives are added into FAST Cache is the order in which they are bound, with the:

first drive being the first Primary;

the second drive being the first Secondary;

the third drive being the next Primary and so on…
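The ordering rule above can be sketched as a small helper that pairs an ordered drive list into primary/secondary mirrors and flags any pair that has only one member in enclosure 0_0. The disk IDs are assumed to use the `bus_enclosure_slot` form (for example `0_0_4`), and all function names here are illustrative.

```python
def mirror_pairs(disks: list[str]) -> list[tuple[str, str]]:
    """Pair drives in the order given: (primary, secondary), (primary, secondary), ..."""
    if len(disks) % 2 != 0:
        raise ValueError("RAID-1 mirrors need an even number of drives")
    return [(disks[i], disks[i + 1]) for i in range(0, len(disks), 2)]


def enclosure(disk_id: str) -> str:
    """Return 'bus_enclosure' from an assumed 'bus_enclosure_slot' disk ID."""
    bus, encl, _slot = disk_id.split("_")
    return f"{bus}_{encl}"


def pairs_splitting_0_0(pairs: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Pairs where exactly one drive sits in enclosure 0_0."""
    return [
        (primary, secondary)
        for primary, secondary in pairs
        if (enclosure(primary) == "0_0") != (enclosure(secondary) == "0_0")
    ]


if __name__ == "__main__":
    good = mirror_pairs(["0_0_4", "0_0_5", "1_0_0", "1_0_1"])
    bad = mirror_pairs(["0_0_4", "1_0_0", "1_0_1", "0_0_5"])
    print(pairs_splitting_0_0(good))
    print(pairs_splitting_0_0(bad))
```

Running a check like this on the drive list before passing it to the create command makes it easier to spot an ordering that would mirror a 0_0 drive with a drive in another DAE.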

You can view the internal private RAID-1 groups of FAST Cache by running the following Navicli command:

naviseccli -h SPA_IP getrg -EngineeringPassword

Note: Do not mix different drive capacities for FAST Cache; use either all 100GB or all 200GB drives. Also, for VNX2 systems there are two types of SSD available:

• FAST Cache SSDs are single-level cell (SLC) Flash drives that are targeted for use with FAST Cache. These drives are available in 100GB and 200GB capacities and can be used both as FAST Cache and as TIER-1 drives in a storage pool.

• FAST VP SSDs are enterprise multi-level cell (eMLC) drives that are targeted for use as TIER-1 drives in a storage pool (not supported as ‘FAST Cache’ drives). They are available in three capacities: 100GB, 200GB and 400GB.

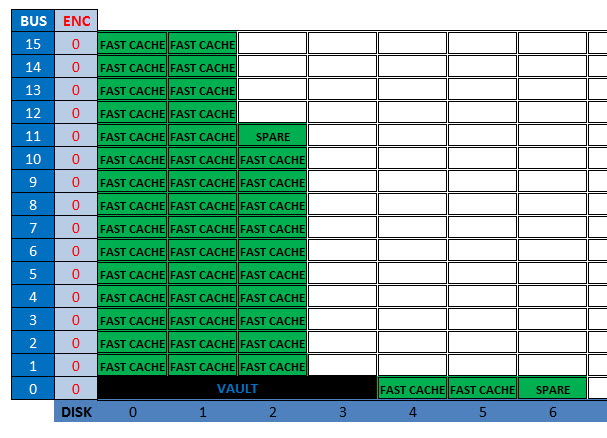

Example ‘FAST Cache’ Drive Layout (VNX 8000)

The following image is an example of a VNX-8000 with the maximum allowed FAST Cache configuration (42 x 200GB FAST Cache SSDs). As you can see, I have spread the FAST Cache drives as evenly as possible across the BE buses, beginning with DAE0_0, in order to achieve the lowest latency possible:

FAST Cache Internals

FAST Cache is built on the ‘Unified LUN’ technology, so the data in FAST Cache is as secure as any other LUN in the CX/VNX array. FAST Cache is nonvolatile storage that survives both power and SP failures, and it does not have to re-warm after a power outage.

During FAST Cache creation, a certain amount of DRAM is allocated for FAST Cache I/O tracking; this is known as the ‘Memory Map’. The size of this bitmap is directly proportional to the size of the FAST Cache, in the region of 1MB for every 1GB of FAST Cache created. When FAST Cache is enabled, FLARE attempts to take approximately one third of the required memory from read cache and two thirds from write cache, and then re-adjusts the existing DRAM read and write caches accordingly.
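As a worked example of the figures above, the following sketch estimates the Memory Map size and how it would be split between read and write cache. It simply applies the approximate ratios quoted in this post (about 1MB per 1GB, one third from read cache and two thirds from write cache); the function name is illustrative and the real allocation on an array may differ.

```python
def memory_map_estimate_mb(fast_cache_gb: float) -> dict[str, float]:
    """Estimate the DRAM used for FAST Cache tracking, in MB."""
    if fast_cache_gb < 0:
        raise ValueError("FAST Cache size cannot be negative")
    total_mb = fast_cache_gb * 1.0        # roughly 1MB of DRAM per 1GB of FAST Cache
    from_read_mb = total_mb / 3           # approx. one third taken from read cache
    from_write_mb = total_mb - from_read_mb  # the remaining two thirds from write cache
    return {
        "total_mb": total_mb,
        "from_read_cache_mb": from_read_mb,
        "from_write_cache_mb": from_write_mb,
    }


if __name__ == "__main__":
    # A 4200GB FAST Cache, the largest configuration listed in this post.
    for name, value in memory_map_estimate_mb(4200).items():
        print(f"{name}: {value:.0f}")
```

For a 4200GB FAST Cache this works out to roughly 4.2GB of DRAM, with about 1.4GB coming from read cache and 2.8GB from write cache.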

With a compatible workload, FAST Cache increases performance by reducing host response times and providing higher throughput (IOPS) for busy areas that may be under pressure from drive or RAID limitations. As well as caching read and write I/Os, the Storage Processors on the VNX coalesce writes and pre-fetch reads to improve performance. However, these operations generally do not accelerate random read-heavy I/O, and this is where FAST Cache helps. FAST Cache monitors the Storage Processors’ I/O activity for blocks that are read or written multiple times; the third I/O to any block within a 64K extent schedules that extent for promotion to FAST Cache, and promotion is handled in the same way as writing or reading an I/O to a LUN. The migration process operates 24×7, using a ‘Least Recently Used’ algorithm to determine which data stays and which goes. Writes continue to be written to DRAM write cache, but with FAST Cache enabled those writes are flushed to the Flash drives, which increases flush speeds.
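The promotion and eviction behaviour described above can be illustrated with a toy model. This is a simplified sketch and not EMC's implementation: it counts I/Os per 64K extent, promotes an extent on its third I/O, and evicts the least recently used extent when the modelled cache is full. The class and attribute names are hypothetical.

```python
from collections import OrderedDict

EXTENT_BYTES = 64 * 1024   # tracking granularity described in this post
PROMOTE_AFTER = 3          # the third I/O to an extent triggers promotion


class FastCacheModel:
    """Toy model of third-touch promotion with LRU eviction."""

    def __init__(self, capacity_extents: int):
        if capacity_extents < 1:
            raise ValueError("capacity must be at least one extent")
        self.capacity = capacity_extents
        self.touches = {}            # extent -> I/O count while not cached
        self.cache = OrderedDict()   # cached extents, least recently used first

    def access(self, byte_offset: int) -> bool:
        """Record one I/O; return True if the modelled cache served it."""
        extent = byte_offset // EXTENT_BYTES
        if extent in self.cache:
            self.cache.move_to_end(extent)   # mark as most recently used
            return True
        count = self.touches.get(extent, 0) + 1
        if count >= PROMOTE_AFTER:
            self.touches.pop(extent, None)
            if len(self.cache) >= self.capacity:
                self.cache.popitem(last=False)   # evict least recently used
            self.cache[extent] = True
        else:
            self.touches[extent] = count
        return False


if __name__ == "__main__":
    model = FastCacheModel(capacity_extents=2)
    # Three I/Os inside the first 64K extent, then a fourth that hits the cache.
    print([model.access(offset) for offset in (0, 4096, 8192, 100)])
```

In this model the I/O that triggers promotion is still counted as a miss, and only later I/Os to the same extent are hits. A large sequential scan touches each extent only once or twice, so it never promotes anything, which is consistent with the workload guidance in this post.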

One important thing to note is that as VNX performance and IOPS figures increase with workload demands, SP CPU utilization also increases, and this should be monitored. There are recommended guidelines on maximum throughput figures for particular arrays… more on this later.

It is important to know the type of workload on your LUN. As an example, log files are generally written and read sequentially across the whole LUN; such a LUN would not be a good candidate for FAST Cache, as Flash drives are not necessarily better than spinning drives at serving large-block sequential I/O. Large-block sequential I/O workloads are also better served by large quantities of drives, and promoting this type of data to FAST Cache will normally result in the data being served by fewer drives, which reduces performance. Avoiding FAST Cache on unsuitable LUNs also helps to reduce the overhead of tracking I/O for promotion to FAST Cache.

Best Suited

Here I have listed conditions that you should factor in when deciding whether FAST Cache will be a good fit for your environment:

• VNX Storage Processor Utilization is under 70-percent

• There is evidence of regular forced Write Cache Flushing

• The majority of I/O has a block size under 64K (OLTP transactions are typically 8K)

• The disk utilization of RAID Groups is consistently running above 60-70%

• Your workload is predominantly random read I/O

• Your production LUNs have a high percentage of read cache misses

• Host response times are unacceptable
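The checklist above can be turned into a simple screening helper. This is only a sketch: the field names are hypothetical, and the 50% cut-offs used for "predominantly random read" and "high percentage of read cache misses" are my own placeholder assumptions, since the list gives no exact figures for those two conditions.

```python
from dataclasses import dataclass


@dataclass
class ArrayMetrics:
    sp_utilization_pct: float        # Storage Processor utilization
    forced_flushes_seen: bool        # evidence of regular forced write cache flushing
    majority_io_kb: float            # block size of the majority of I/O
    raid_group_disk_util_pct: float  # sustained disk utilization of RAID Groups
    random_read_pct: float           # share of I/O that is random read
    read_cache_miss_pct: float       # read cache miss rate on production LUNs
    response_time_ok: bool           # are host response times acceptable?


def fast_cache_indicators(m: ArrayMetrics, majority_pct: float = 50.0) -> dict[str, bool]:
    """Map each checklist condition to True if the metrics satisfy it."""
    return {
        "sp_under_70pct": m.sp_utilization_pct < 70,
        "forced_flushing": m.forced_flushes_seen,
        "io_under_64k": m.majority_io_kb < 64,
        "disks_busy": m.raid_group_disk_util_pct >= 60,
        "mostly_random_read": m.random_read_pct > majority_pct,   # assumed cut-off
        "many_read_misses": m.read_cache_miss_pct > majority_pct,  # assumed cut-off
        "slow_host_response": not m.response_time_ok,
    }


if __name__ == "__main__":
    sample = ArrayMetrics(55, True, 8, 75, 80, 65, False)
    for condition, met in fast_cache_indicators(sample).items():
        print(f"{condition}: {met}")
```

The more conditions that come back True, the stronger the case for FAST Cache; treat the result as a prompt for further analysis rather than a verdict.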

Useful EMC KBs

Please see the following EMC KB articles for further reference (support.emc.com):

Please note that some guidelines apply differently to CLARiiON, VNX and VNX2.

KB 73184: Rules of thumb for configuring FAST Cache for best performance and highest availability

KB 14561: How to flush and disable FAST Cache

KB 15075: FAST Cache performance considerations

KB 82823: Should FAST Cache be configured across enclosures?

KB 15606: How to monitor FAST Cache performance using Unisphere Analyzer

KB 168348: [Q&A]VNX Fast Cache

KB 84362: Where do I find information about FAST Cache?
