A Quantum Leap in Hyper-Converged Appliances

EMC|VCE and VMware have added a Hyper-Converged Infrastructure Appliance (HCIA) offering, named VxRail, to the existing CI portfolio of Vblocks, VxBlocks and VxRacks (Blocks, Racks, Appliances).

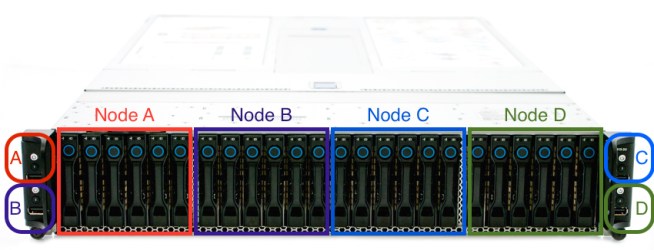

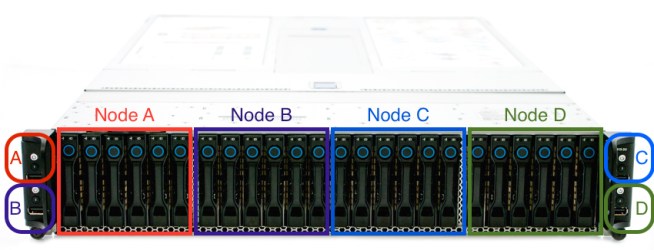

VxRail is built on a modular scale-out clustering architecture made up of appliances (the base building block), where each appliance can house up to 4x industry-standard x86 compute nodes (four independent ESXi hosts) inclusive of storage. The appliance has a small footprint, consuming only two rack units (‘2U’) in height, so we will use the term ‘2U4N’ as an abbreviation for the rack space and compute node count within the appliance. With the initial launch (Q1 2016) VxRail can scale up to 8x appliances for a total of 32x compute nodes. The Q2 VxRail release doubles these limits, allowing up to 16x appliances for a maximum of 64 nodes in a VxRail cluster.
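The scaling figures above follow directly from the ‘2U4N’ building block. As a minimal sketch (the function and constant names are illustrative assumptions, not part of any VxRail tooling), the node count and rack space for a fully populated cluster can be worked out like this:

```python
# Illustrative arithmetic only; names are hypothetical, figures are from the text.
NODES_PER_APPLIANCE = 4       # each appliance houses up to four ESXi nodes
RACK_UNITS_PER_APPLIANCE = 2  # each appliance is 2U high

# Maximum appliances per cluster for each release, as quoted above.
MAX_APPLIANCES = {"Q1": 8, "Q2": 16}


def cluster_footprint(appliances: int) -> dict:
    """Return node count and rack units for fully populated appliances."""
    if appliances < 1:
        raise ValueError("a cluster needs at least one appliance")
    return {
        "nodes": appliances * NODES_PER_APPLIANCE,
        "rack_units": appliances * RACK_UNITS_PER_APPLIANCE,
    }


for release, appliances in MAX_APPLIANCES.items():
    print(release, cluster_footprint(appliances))
```

At the Q2 maximum, 64 nodes fit in 32 rack units, not counting switches or any other rack equipment.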


The entire system is managed from a central single-pane-of-glass console referred to as the ‘VxRail Manager’ (incorporating vCenter Server and vCenter Log Insight). This wizard-driven management system lets the user run the entire system from one console, with functions ranging from power on/off and orchestrated provisioning tasks (specifying predefined VM t-shirt sizes and attributes such as OS, VLAN, security etc.) to applying non-disruptive patches and upgrades. The inclusion of VCE Vision Intelligent Operations caters for centralised monitoring, including remote collection and reporting on the hardware and software configurations of a VxRail from an extended centralised infrastructure residing on Vblock/VxBlock (VCE Vision 3.3.0 introduces support for the VxRail Appliance).

This entry-level, scalable design means VxRail is an ideal candidate for small to mid-sized enterprise data centres, remote office/branch office (ROBO) or enterprise edge scenarios. Appliances can be added to an existing cluster seamlessly, and resources are rebalanced throughout the cluster, resulting in a single pool of resources. The software stack, collectively developed by EMC, VCE and VMware, comprises VMware vSphere (vSphere HA, vMotion & DRS as standard) with Virtual SAN (VSAN) and EMC software, including an option for RP4VMs to provide VM-level granular disaster recovery. In Q1, as part of the initial release, the VxRail Appliance will ship with VSAN 6.1, and in Q2 a non-disruptive upgrade to VSAN 6.2 will be made available. The Q2 upgrade to VMware VSAN 6.2 will coincide with the addition of the All-Flash VxRail models, which as a result will offer enterprise-ready services such as Deduplication & Compression (enabled together, cluster-wide) and Erasure Coding (RAID-5/RAID-6 support). These services will be available with All-Flash VSAN. More info on the new features of VSAN 6.2 can be found on @CormacJHogan’s blog.

Used as an endpoint in an ‘Enterprise Hybrid Cloud (EHC)’ solution, VxRail can be registered with the vRealize Automation Service Catalog, extending the EHC solution to offer IaaS and/or application services to your edge locations simply by adding VxRail as an additional vCenter endpoint.

As standard with all EMC CI, VxRail comes with single-call 24/7 support for both hardware and software, plus dial-home capability through EMC Secure Remote Services (ESRS). Node maintenance is straightforward: vMotion the workload off the node, remove the node from the appliance and repair or replace it, all non-disruptively. Both the disks and PSU are customer replaceable units (CRU).

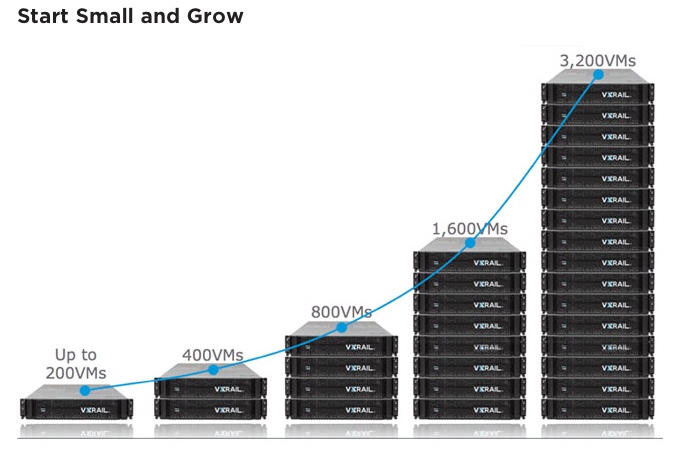

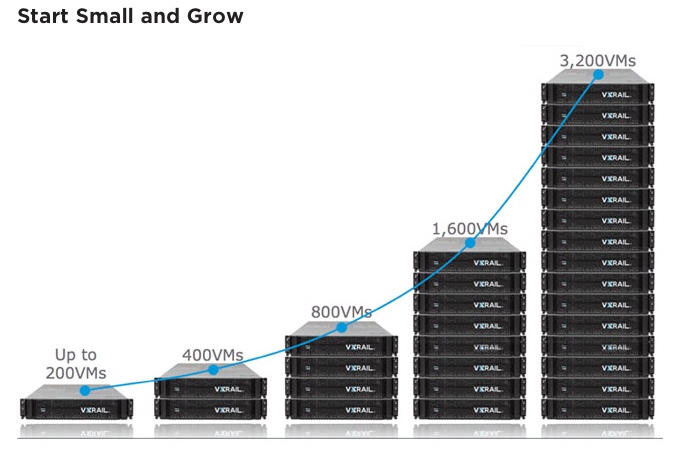

So the message is clear: VxRail is simple to deploy, easy to manage, starts small but can scale quite easily (40 VMs to 3,200 VMs) by adding Appliances/Nodes (Single Node increments with Q2 release).

A view of the VxRail 4-Node Appliance Chassis

Front view of Appliance (Storage in front)

Note: the drive allocation is on a per node basis with Node A being allocated the first 6 drives…

Rear view of Appliance (Compute in back)

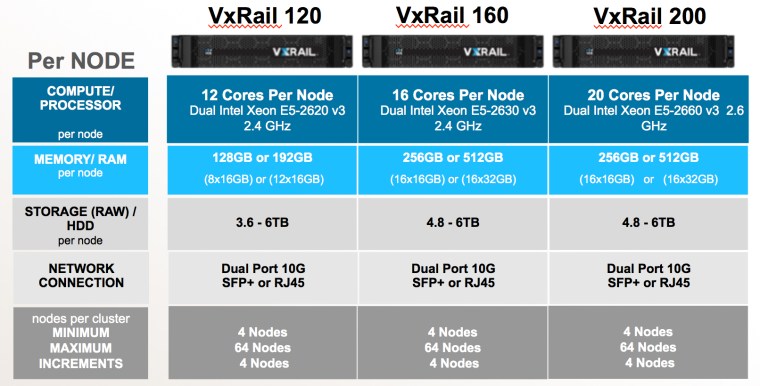

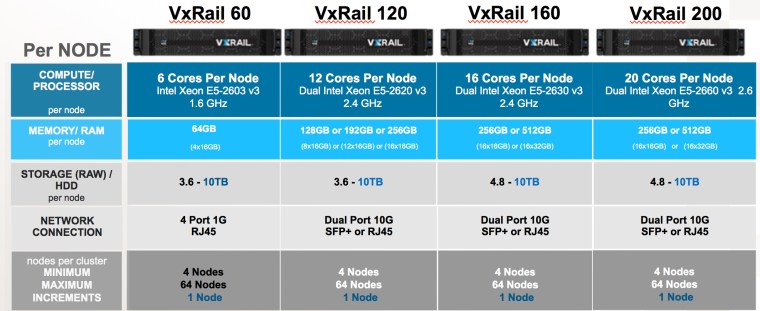

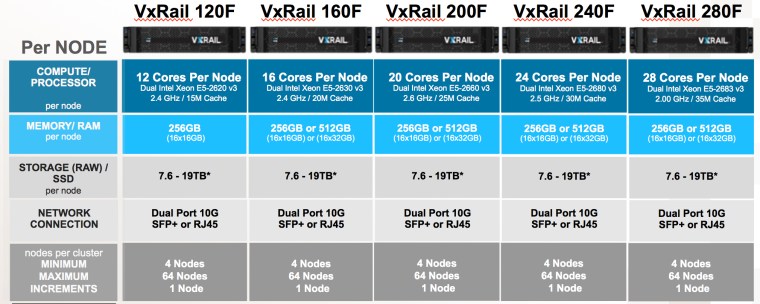

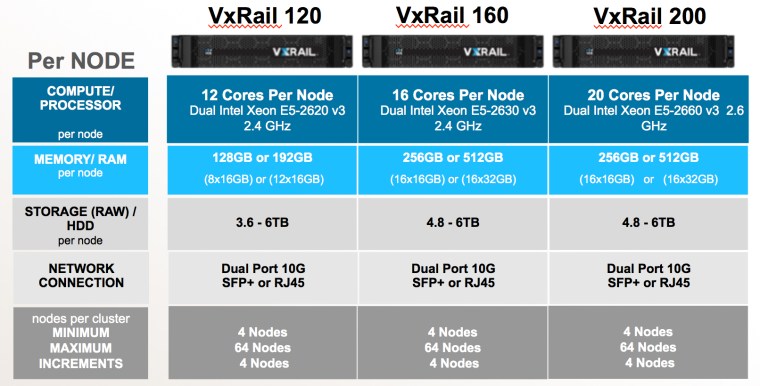

Introducing the VxRail Models: ‘Hybrid’ & ‘All-Flash’

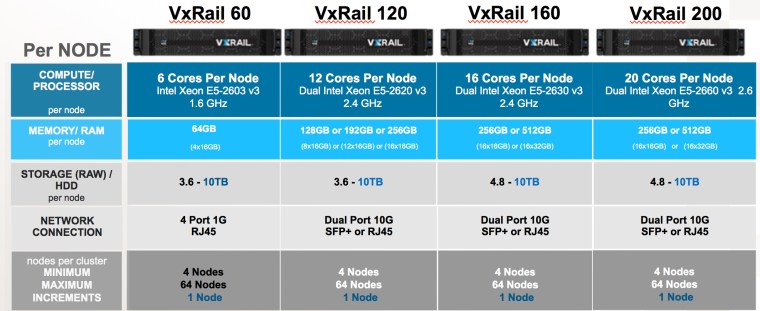

The VxRail Appliance offers multiple configuration options across two appliance types, ‘Hybrid’ and ‘All-Flash’, with 4x Hybrid and 5x All-Flash models. Each model comes with various configuration options for compute, memory, storage and network to cater for a wide range of workloads.

The Hybrid models available with the initial VxRail Q1 release start at the ‘VxRail 120’ appliance, with 48 cores per 4x node appliance and up to 768GB RAM, while at the higher end of the Hybrid scale a ‘VxRail 200’ appliance can cater for up to 80 cores and 2TB of RAM. Thus, in a maximum fully populated Hybrid configuration using all ‘VxRail 200’ appliances, a 64 node cluster can cater for 1,280 cores, 32TB RAM and 384TB of raw storage. Each Hybrid node disk group configuration will have at least 1 cache device (SSD).
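To show where the cluster maximums come from, here is a small sketch that multiplies the per-appliance ‘VxRail 200’ figures out to a 64 node cluster. The `ApplianceSpec` class and its field names are hypothetical helpers for illustration only, and the 6TB of raw storage per Hybrid node is taken from the quoted 384TB across 64 nodes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApplianceSpec:
    """Per-appliance figures (hypothetical helper, not a VxRail API)."""
    name: str
    cores: int             # total cores across the appliance's nodes
    ram_tb: int            # total RAM across the appliance's nodes, in TB
    raw_tb_per_node: int   # raw storage per node, in TB
    nodes: int = 4         # 2U4N: four nodes per appliance

    def cluster_totals(self, appliances: int) -> dict:
        """Aggregate resources for a cluster of identical appliances."""
        return {
            "nodes": appliances * self.nodes,
            "cores": appliances * self.cores,
            "ram_tb": appliances * self.ram_tb,
            "raw_tb": appliances * self.nodes * self.raw_tb_per_node,
        }


# 'VxRail 200' at its maximum configuration: 80 cores and 2TB RAM per appliance.
vxrail_200 = ApplianceSpec("VxRail 200", cores=80, ram_tb=2, raw_tb_per_node=6)

# Sixteen appliances make up the 64 node cluster described above.
print(vxrail_200.cluster_totals(16))
```

Swapping in a different per-node capacity (for example the 10TB quoted for the Q2 Hybrid nodes) updates the raw storage total without touching the rest of the calculation.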

The Hybrid models and their respective configurations will gain additional enhancements during the Q2 timeframe, as detailed in the following chart. Note the addition of an even lower entry point, the ‘VxRail 60’ model, starting with a single socket, 6 cores and 64GB RAM. The storage capacity per Hybrid node also increases from 6TB to 10TB (640TB for a 64 node cluster in the Q2 release). In addition, there will be an option for single node scaling, which adds greater flexibility in terms of upgrades and scalability.

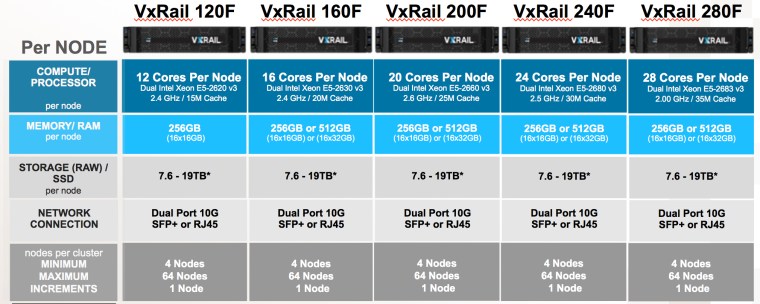

VxRail All-Flash models and their respective configurations which will be available as part of the Q2 release:

This makes for a staggering All-Flash VxRail 4x node appliance which can scale to 76TB of raw SSD storage (19TB per node) and 112 cores, all enclosed in a ‘2U4N’ footprint! Sixteen ‘VxRail 280F’ appliances in a single cluster can run up to 3,200 VMs with ~1.2PB of all-flash raw capacity, providing incredibly low latency and extreme performance.
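The All-Flash numbers can be sanity-checked the same way. This is only a back-of-the-envelope sketch using the figures quoted above; the variable names are illustrative, and the TB to PB conversion assumes decimal units (1PB = 1,000TB).

```python
# Figures quoted in the text for the 'VxRail 280F' All-Flash appliance.
RAW_TB_PER_NODE = 19
NODES_PER_APPLIANCE = 4
CORES_PER_APPLIANCE = 112
APPLIANCES_PER_CLUSTER = 16
MAX_VMS_PER_CLUSTER = 3200

nodes = APPLIANCES_PER_CLUSTER * NODES_PER_APPLIANCE
raw_tb_per_appliance = RAW_TB_PER_NODE * NODES_PER_APPLIANCE
raw_tb_cluster = raw_tb_per_appliance * APPLIANCES_PER_CLUSTER
raw_pb_cluster = raw_tb_cluster / 1000  # assumes decimal units
cores_cluster = CORES_PER_APPLIANCE * APPLIANCES_PER_CLUSTER
vms_per_node = MAX_VMS_PER_CLUSTER / nodes

print(f"{raw_tb_per_appliance} TB raw per appliance")
print(f"{raw_tb_cluster} TB (~{raw_pb_cluster:.1f} PB) raw per cluster")
print(f"{cores_cluster} cores across {nodes} nodes")
print(f"{vms_per_node:.0f} VMs per node at the quoted maximum")
```

Note that these are raw capacities; usable capacity depends on the VSAN storage policy and on how much Deduplication & Compression or Erasure Coding saves for a given workload.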

One additional point to note concerns mixing model types within the same cluster: this flexibility is allowed, provided you do not mix Hybrid and All-Flash nodes within the same cluster, as that would result in an unbalanced configuration from a performance standpoint.
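The mixing rule is easy to express as a pre-deployment check. The sketch below is purely illustrative: `MODEL_TYPE` and `check_cluster_mix` are hypothetical names, and the mapping lists only the models named in this post rather than the full catalogue of 4x Hybrid and 5x All-Flash models.

```python
# Partial, illustrative mapping of model name to appliance type.
MODEL_TYPE = {
    "VxRail 60": "hybrid",
    "VxRail 120": "hybrid",
    "VxRail 200": "hybrid",
    "VxRail 280F": "all-flash",
}


def check_cluster_mix(models):
    """Return the cluster's appliance type, or raise if types are mixed.

    Different models of the same type may share a cluster; Hybrid and
    All-Flash may not.
    """
    types = set()
    for model in models:
        if model not in MODEL_TYPE:
            raise KeyError(f"unknown model: {model}")
        types.add(MODEL_TYPE[model])
    if len(types) > 1:
        raise ValueError("Hybrid and All-Flash models cannot share a cluster")
    return types.pop() if types else None


# Two different Hybrid models in one cluster are fine.
print(check_cluster_mix(["VxRail 120", "VxRail 200"]))

# Mixing Hybrid and All-Flash is rejected.
try:
    check_cluster_mix(["VxRail 200", "VxRail 280F"])
except ValueError as err:
    print("rejected:", err)
```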


David,

Thanks for the detailed information. Personally I felt VxRail has features of VSPEX BLUE and VxRack. I also heard that EVO:RAIL from VMware is obsolete. Maybe my expectations of a hyperconverged appliance were higher, so I didn't find VxRail really exciting. Waiting for the Q2 announcements. Thanks again for the detailed overview.

Hey… Thanks for very useful information…

It would be great if you could discuss backup and restore of these 3,200 VMs.